These study questions cover Microsoft exam 70-776, Perform Big Data Engineering on Microsoft Cloud Services (beta), and are meant to help you pass the 70-776 test on your first attempt.

NEW QUESTION 1

You have a Microsoft Azure Data Factory that recently ran several activities in parallel. You receive alerts indicating that there are insufficient resources.

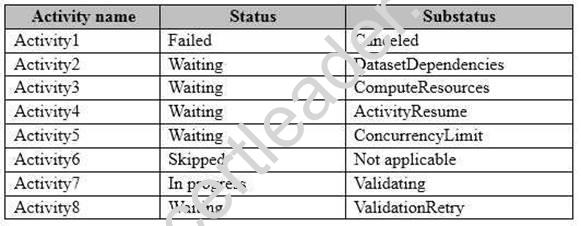

From the Activity Windows list in the Monitoring and Management app, you discover the statuses described in the following table.

Which activity cannot complete because of insufficient resources?

- A. Activity2

- B. Activity4

- C. Activity5

- D. Activity7

Answer: C

NEW QUESTION 2

Note: This question is part of a series of questions that present the same scenario. For your convenience, the scenario is repeated in each question. Each question presents a different goal and answer choices, but the text of the scenario is exactly the same in each question in this series.

Start of repeated scenario

You are migrating an existing on-premises data warehouse named LocalDW to Microsoft Azure. You will use an Azure SQL data warehouse named AzureDW for data storage and an Azure Data Factory named AzureDF for extract, transformation, and load (ETL) functions.

For each table in LocalDW, you create a table in AzureDW.

On the on-premises network, you have a Data Management Gateway.

Some source data is stored in Azure Blob storage. Some source data is stored on an on-premises Microsoft SQL Server instance. The instance has a table named Table1.

After data is processed by using AzureDF, the data must be archived and accessible forever. The archived data must meet a Service Level Agreement (SLA) for availability of 99 percent. If an Azure region fails, the archived data must be available for reading always. The storage solution for the archived data must minimize costs.

End of repeated scenario.

You need to configure an activity to move data from blob storage to AzureDW. What should you create?

- A. a pipeline

- B. a linked service

- C. an automation runbook

- D. a dataset

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-factory/v1/data-factory-azure-blob-connector

NEW QUESTION 3

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

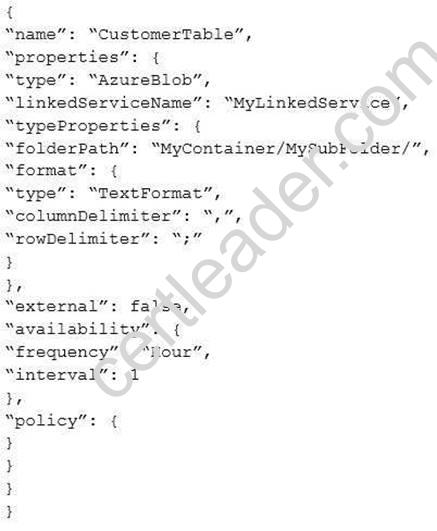

You are troubleshooting a slice in Microsoft Azure Data Factory for a dataset that has been in a waiting state for the last three days. The dataset should have been ready two days ago.

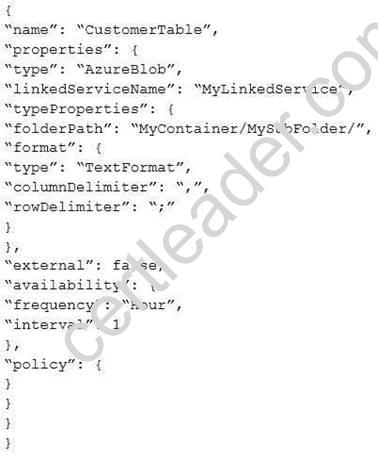

The dataset is being produced outside the scope of Azure Data Factory. The dataset is defined by using the following JSON code.

You need to modify the JSON code to ensure that the dataset is marked as ready whenever there is data in the data store.

Solution: You change the interval to 24.

Does this meet the goal?

- A. Yes

- B. No

Answer: B

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-factory/v1/data-factory-create-datasets
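For context, the Data Factory v1 dataset documentation referenced above describes an `external` flag for datasets that are produced outside the factory. The following is a minimal Python sketch of setting that flag on a dataset definition. The dataset name, linked service name, and folder path are hypothetical placeholders; the JSON from the question is not reproduced here.

```python
import copy
import json

# Hypothetical v1 dataset definition. Every name and path below is a
# placeholder chosen for illustration, not the JSON shown in the question.
dataset = {
    "name": "ExampleBlobDataset",
    "properties": {
        "type": "AzureBlob",
        "linkedServiceName": "ExampleStorageLinkedService",
        "typeProperties": {"folderPath": "examplecontainer/input/"},
        "availability": {"frequency": "Day", "interval": 1},
    },
}


def mark_external(definition):
    """Return a copy of a v1 dataset definition flagged as externally produced."""
    updated = copy.deepcopy(definition)
    updated["properties"]["external"] = True
    return updated


external_dataset = mark_external(dataset)
print(json.dumps(external_dataset, indent=2))
```

The sketch leaves the `availability` section untouched, which matches the answer above: changing the interval alone does not tell Data Factory that the data comes from outside.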

NEW QUESTION 4

You plan to use Microsoft Azure Data Factory to copy data daily from an Azure SQL data warehouse to an Azure Data Lake Store.

You need to define a linked service for the Data Lake Store. The solution must prevent the access token from expiring.

Which type of authentication should you use?

- A. OAuth

- B. service-to-service

- C. Basic

- D. service principal

Answer: D

Explanation:

References:

https://docs.microsoft.com/en-gb/azure/data-factory/v1/data-factory-azure-datalake-connector#azure-data-lake-store-linked-service-properties

NEW QUESTION 5

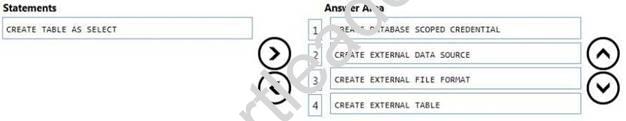

DRAG DROP

You have a Microsoft Azure SQL data warehouse.

You plan to reference data from Azure Blob storage. The data is stored in the GZIP compressed format. The blob storage requires authentication.

You create a master key for the data warehouse and a database schema.

You need to reference the data without importing the data to the data warehouse.

Which four statements should you execute in sequence? To answer, move the appropriate statements from the list of statements to the answer area and arrange them in the correct order.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

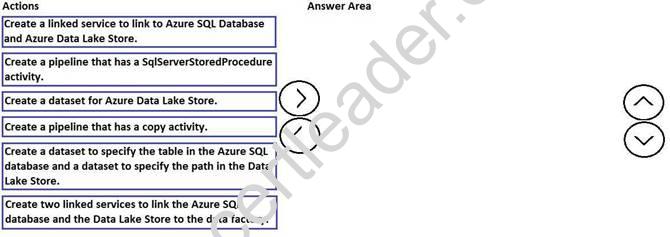

NEW QUESTION 6

DRAG DROP

You need to copy data from Microsoft Azure SQL Database to Azure Data Lake Store by using Azure Data Factory.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-factory/copy-activity-overview

NEW QUESTION 7

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are troubleshooting a slice in Microsoft Azure Data Factory for a dataset that has been in a waiting state for the last three days. The dataset should have been ready two days ago.

The dataset is being produced outside the scope of Azure Data Factory. The dataset is defined by using the following JSON code.

You need to modify the JSON code to ensure that the dataset is marked as ready whenever there is data in the data store.

Solution: You add a structure property to the dataset.

Does this meet the goal?

- A. Yes

- B. No

Answer: B

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-factory/v1/data-factory-create-datasets

NEW QUESTION 8

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have a table named Table1 that contains 3 billion rows. Table1 contains data from the last 36 months.

At the end of every month, the oldest month of data is removed based on a column named DateTime.

You need to minimize how long it takes to remove the oldest month of data.

Solution: You implement a columnstore index on the DateTime column.

Does this meet the goal?

- A. Yes

- B. No

Answer: A

NEW QUESTION 9

DRAG DROP

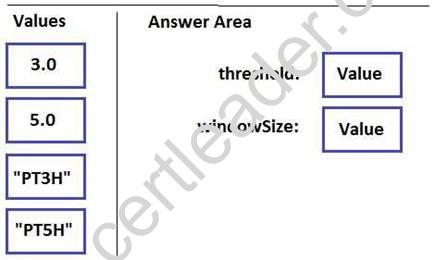

You plan to create an alert for a Microsoft Azure Data Factory pipeline.

You need to configure the alert to trigger when the total number of failed runs exceeds five within a three-hour period.

How should you configure the window size and the threshold in the JSON file? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-factory/v1/data-factory-monitor-manage-pipelines?view=powerbiapi-1.1.10
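The window size in an alert definition of this kind is written as an ISO 8601 duration, so a three-hour period becomes `PT3H`. The sketch below, in Python, builds the threshold portion of such a condition. It is a simplified, flattened layout for illustration: the helper names are hypothetical, and the real alert JSON nests the metric name inside a data source object.

```python
from datetime import timedelta


def iso8601_hours(window):
    """Format a whole-hour timedelta as an ISO 8601 duration such as PT3H."""
    hours, remainder = divmod(int(window.total_seconds()), 3600)
    if remainder or hours <= 0:
        raise ValueError("window must be a positive whole number of hours")
    return f"PT{hours}H"


def failed_runs_condition(threshold, window):
    """Build a simplified alert condition (assumed layout, for illustration only)."""
    return {
        "metricName": "FailedRuns",
        "operator": "GreaterThan",
        "threshold": float(threshold),
        "windowSize": iso8601_hours(window),
        "timeAggregation": "Total",
    }


# More than five failed runs in total within a three-hour window.
condition = failed_runs_condition(5, timedelta(hours=3))
print(condition)
```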

NEW QUESTION 10

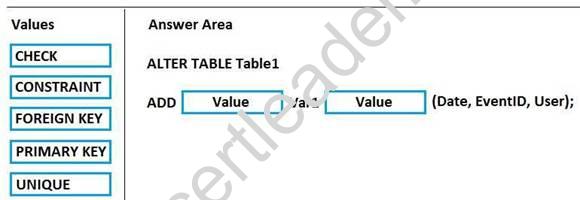

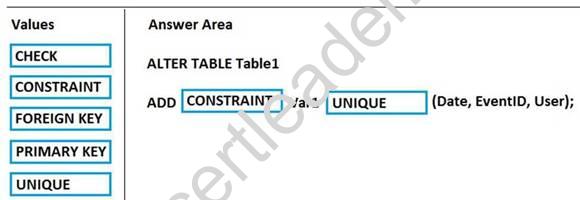

DRAG DROP

You use Microsoft Azure Stream Analytics to analyze data from an Azure event hub in real time and send the output to a table named Table1 in an Azure SQL database. Table1 has three columns named Date, EventID, and User.

You need to prevent duplicate data from being stored in the database.

How should you complete the statement? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

NEW QUESTION 11

You have a Microsoft Azure Data Lake Analytics service. You plan to configure diagnostic logging.

You need to use Microsoft Operations Management Suite (OMS) to monitor the IP addresses that are used to access the Data Lake Store.

What should you do?

- A. Stream the request logs to an event hub.

- B. Send the audit logs to Log Analytics.

- C. Send the request logs to Log Analytics.

- D. Stream the audit logs to an event hub.

Answer: B

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-lake-analytics/data-lake-analytics-diagnostic-logs

https://docs.microsoft.com/en-us/azure/security/azure-log-audit

NEW QUESTION 12

You are designing a solution that will use Microsoft Azure Data Lake Store.

You need to recommend a solution to ensure that the storage service is available if a regional outage occurs. The solution must minimize costs.

What should you recommend?

- A. Create two Data Lake Store accounts and copy the data by using Azure Data Factory.

- B. Create one Data Lake Store account that uses a monthly commitment package.

- C. Create one read-access geo-redundant storage (RA-GRS) account and configure a Recovery Services vault.

- D. Create one Data Lake Store account and create an Azure Resource Manager template that redeploys the services to a different region.

Answer: D

NEW QUESTION 13

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are monitoring user queries to a Microsoft Azure SQL data warehouse that has six compute nodes.

You discover that compute node utilization is uneven. The rows_processed column from sys.dm_pdw_workers shows a significant variation in the number of rows being moved among the distributions for the same table for the same query.

You need to ensure that the load is distributed evenly across the compute nodes.

Solution: You add a clustered columnstore index.

Does this meet the goal?

- A. Yes

- B. No

Answer: B
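An index changes how rows are stored inside each distribution, not which distribution a row is assigned to. Uneven row counts across distributions usually trace back to the distribution key. The Python sketch below illustrates the effect with a stand-in hash (MD5, not the hash the service uses) over the 60 distributions of an Azure SQL data warehouse. The `Region` and `OrderId` columns and the row counts are hypothetical.

```python
import hashlib
from collections import Counter

DISTRIBUTIONS = 60  # each table is spread across 60 distributions


def distribution_for(value):
    """Map a column value to a distribution using a stand-in hash (MD5)."""
    digest = hashlib.md5(str(value).encode("utf-8")).hexdigest()
    return int(digest, 16) % DISTRIBUTIONS


def rows_per_distribution(values):
    """Count how many rows land in each distribution for a candidate key."""
    return Counter(distribution_for(v) for v in values)


# Hypothetical table: 60,000 rows, a three-value Region column, a unique OrderId.
regions = ["East", "West", "North"]
rows = [(order_id, regions[order_id % 3]) for order_id in range(60_000)]

by_region = rows_per_distribution(region for _, region in rows)
by_order = rows_per_distribution(order_id for order_id, _ in rows)

print("distributions used when hashing Region:", len(by_region))
print("largest distribution when hashing Region:", max(by_region.values()))
print("distributions used when hashing OrderId:", len(by_order))
print("largest distribution when hashing OrderId:", max(by_order.values()))
```

With a low-cardinality key, at most three distributions receive any rows, so most compute nodes sit idle for that table no matter which index is defined on it.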

NEW QUESTION 14

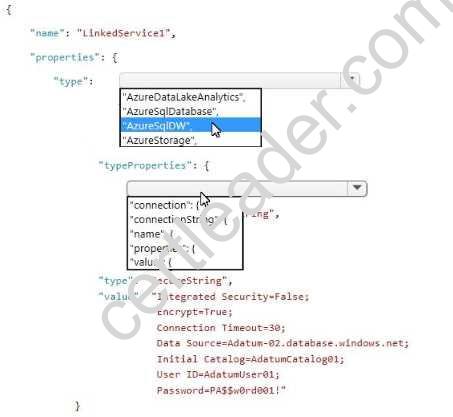

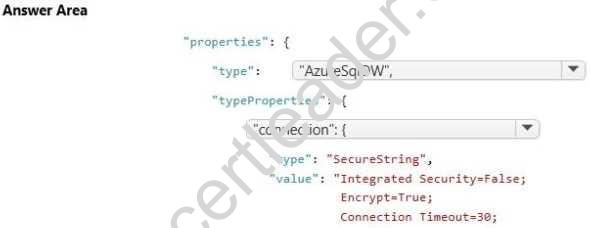

HOTSPOT

You have a Microsoft Azure Data Factory version 2 (V2) service.

You need to deploy a linked service to an existing database in Azure SQL Data Warehouse.

How should you complete the JSON snippet? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

NEW QUESTION 15

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are monitoring user queries to a Microsoft Azure SQL data warehouse that has six compute nodes.

You discover that compute node utilization is uneven. The rows_processed column from sys.dm_pdw_workers shows a significant variation in the number of rows being moved among the distributions for the same table for the same query.

You need to ensure that the load is distributed evenly across the compute nodes.

Solution: You add a nonclustered columnstore index.

Does this meet the goal?

- A. Yes

- B. No

Answer: B

NEW QUESTION 16

You have a Microsoft Azure SQL data warehouse named DW1 that is used only from Monday to Friday.

You need to minimize Data Warehouse Unit (DWU) usage during the weekend. What should you do?

- A. From the Azure CLI, run the account set command.

- B. Run the ALTER DATABASE statement.

- C. Call the Create or Update Database REST API.

- D. Run the Suspend-AzureRmSqlDatabase Azure PowerShell cmdlet.

Answer: D

NEW QUESTION 17

You have a Microsoft Azure SQL data warehouse.

You need to configure Data Warehouse Units (DWUs) to ensure that you have six compute nodes. The solution must minimize costs.

Which value should you set for the DWUs?

- A. DW200

- B. DW400

- C. DW600

- D. DW1000

Answer: C

Explanation:

References:

https://docs.microsoft.com/en-us/azure/sql-data-warehouse/sql-data-warehouse-manage-compute-overview
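The reasoning behind the answer can be sketched as a lookup in Python. The node counts in the table below are believed to match the first-generation service levels described on the referenced page, but treat them as an assumption and verify them there. The function names are hypothetical.

```python
# Assumed mapping of service level to compute nodes (verify against the docs).
COMPUTE_NODES = {
    "DW100": 1, "DW200": 2, "DW300": 3, "DW400": 4, "DW500": 5, "DW600": 6,
    "DW1000": 10, "DW1200": 12, "DW1500": 15, "DW2000": 20,
    "DW3000": 30, "DW6000": 60,
}
TOTAL_DISTRIBUTIONS = 60  # fixed number of distributions per data warehouse


def smallest_level_for(nodes):
    """Return the lowest service level that runs exactly `nodes` compute nodes."""
    matches = [level for level, count in COMPUTE_NODES.items() if count == nodes]
    if not matches:
        return None
    # Lower DWU values cost less, so pick the numerically smallest match.
    return min(matches, key=lambda level: int(level[2:]))


def distributions_per_node(level):
    """Number of distributions each compute node handles at a service level."""
    return TOTAL_DISTRIBUTIONS // COMPUTE_NODES[level]


print(smallest_level_for(6))
print(distributions_per_node("DW600"))
```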

NEW QUESTION 18

You are developing an application by using the Microsoft .NET SDK. The application will access data from a Microsoft Azure Data Lake folder.

You plan to authenticate the application by using service-to-service authentication. You need to ensure that the application can access the Data Lake folder.

Which three actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

- A. Register an Azure Active Directory app that uses the Web app/API application type.

- B. Configure the application to use the application ID, authentication key, and tenant ID.

- C. Assign the Azure Active Directory app permission to the Data Lake Store folder.

- D. Configure the application to use the OAuth 2.0 token endpoint.

- E. Register an Azure Active Directory app that uses the Native application type.

- F. Configure the application to use the application ID and redirect URI.

Answer: ABC

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-lake-store/data-lake-store-service-to-service-authenticate-using-active-directory
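Service-to-service authentication corresponds to the OAuth 2.0 client credentials grant: the application presents its application ID, authentication key, and tenant ID, with no user sign-in or redirect URI. The question concerns the .NET SDK; the sketch below uses Python instead and only builds the token request without sending it. The identifier values are placeholders, and the helper name is hypothetical.

```python
from urllib.parse import urlencode

# Resource identifier for Azure Data Lake Store when requesting a token.
ADLS_RESOURCE = "https://datalake.azure.net/"


def build_token_request(tenant_id, application_id, authentication_key):
    """Return the token endpoint URL and form body for a client credentials grant."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/token"
    form = {
        "grant_type": "client_credentials",
        "client_id": application_id,
        "client_secret": authentication_key,
        "resource": ADLS_RESOURCE,
    }
    return url, urlencode(form)


# Placeholder values only; nothing is sent over the network here.
url, body = build_token_request("example-tenant-id", "example-app-id", "example-key")
print(url)
print(body)
```

The registered app still needs permission on the Data Lake Store folder before a token obtained this way grants access to the data.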

NEW QUESTION 19

You are implementing a solution by using Microsoft Azure Data Lake Analytics. You have a dataset that contains data related to website visits.

You need to combine overlapping visits into a single entry based on the timestamp of the visits. Which type of U-SQL interface should you use?

- A. IExtractor

- B. IReducer

- C. Aggregate

- D. IProcessor

Answer: C
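Whichever U-SQL extension point is used, the combining step itself is an interval merge over the visit timestamps. The following is a minimal sketch in Python rather than U-SQL or C#, assuming each visit is a hypothetical `(start, end)` pair of comparable timestamps.

```python
def merge_overlapping_visits(visits):
    """Combine visits whose time ranges overlap or touch into single entries."""
    merged = []
    for start, end in sorted(visits):
        if merged and start <= merged[-1][1]:
            # Overlaps the previous entry: extend it instead of adding a new one.
            previous_start, previous_end = merged[-1]
            merged[-1] = (previous_start, max(previous_end, end))
        else:
            merged.append((start, end))
    return merged


# Timestamps are plain integers here to keep the example short.
visits = [(10, 12), (1, 5), (3, 7)]
print(merge_overlapping_visits(visits))
```

Sorting by start time first means each visit only has to be compared with the most recently merged entry.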

NEW QUESTION 20

You are developing an application that uses Microsoft Azure Stream Analytics.

You have data structures that are defined dynamically.

You want to enable consistency between the logical methods used by stream processing and batch processing.

You need to ensure that the data can be integrated by using consistent data points. What should you use to process the data?

- A. a vectorized Microsoft SQL Server Database Engine

- B. directed acyclic graph (DAG)

- C. Apache Spark queries that use updateStateByKey operators

- D. Apache Spark queries that use mapWithState operators

Answer: D

NEW QUESTION 21

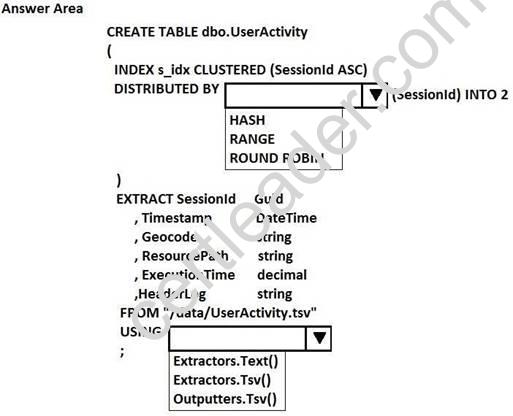

HOTSPOT

You have a Microsoft Azure Data Lake Analytics service.

You have a tab-delimited file named UserActivity.tsv that contains logs of user sessions. The file does not have a header row.

You need to create a table and to load the logs to the table. The solution must distribute the data by a column named SessionId.

How should you complete the U-SQL statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

References:

https://msdn.microsoft.com/en-us/library/mt706197.aspx

NEW QUESTION 22

You have a Microsoft Azure Data Lake Store and an Azure Active Directory tenant.

You are developing an application that will access the Data Lake Store by using end-user credentials. You need to ensure that the application uses end-user authentication to access the Data Lake Store. What should you create?

- A. a Native Active Directory app registration

- B. a policy assignment that uses the Allowed resource types policy definition

- C. a Web app/API Active Directory app registration

- D. a policy assignment that uses the Allowed locations policy definition

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-us/azure/data-lake-store/data-lake-store-end-user-authenticate-using-active-directory

NEW QUESTION 23

You plan to use Microsoft Azure Event Hubs in Azure Stream Analytics to consume time-series aggregations from several published data sources, such as IoT data, reference data, and social media. You expect several TB of data to be consumed daily. All the consumed data will be retained for one week.

You need to recommend a storage solution for the data. The solution must minimize costs. What should you recommend?

- A. Azure DocumentDB

- B. Azure Data Lake

- C. Azure Table Storage

- D. Azure Blob storage

Answer: B

NEW QUESTION 24

You have sensor devices that report data to Microsoft Azure Stream Analytics. Each sensor reports data several times per second.

You need to create a live dashboard in Microsoft Power BI that shows the performance of the sensor devices. The solution must minimize lag when visualizing the data.

Which function should you use for the time-series data element?

- A. LAG

- B. SlidingWindow

- C. System.TimeStamp

- D. TumblingWindow

Answer: D
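A tumbling window groups events into fixed, non-overlapping intervals, so each reading contributes to exactly one aggregate. The sketch below shows that grouping in Python rather than in the Stream Analytics query language. The reading format (millisecond timestamp, value) is an assumption, and windows are keyed by their start time here for simplicity.

```python
from collections import defaultdict


def tumbling_window_averages(readings, window_ms):
    """Average sensor readings per fixed, non-overlapping window."""
    buckets = defaultdict(list)
    for timestamp_ms, value in readings:
        # Integer division assigns each reading to exactly one window.
        window_start = (timestamp_ms // window_ms) * window_ms
        buckets[window_start].append(value)
    return {
        start: sum(values) / len(values)
        for start, values in sorted(buckets.items())
    }


# Hypothetical readings: (timestamp in milliseconds, measured value).
readings = [(200, 10.0), (700, 20.0), (1100, 30.0)]
print(tumbling_window_averages(readings, 1000))
```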

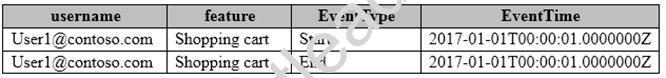

NEW QUESTION 25

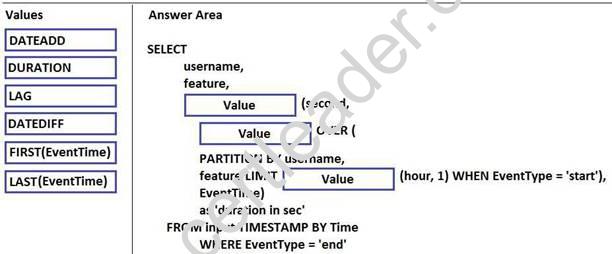

DRAG DROP

You have a Microsoft Azure Stream Analytics solution that captures website visits and user interactions on the website.

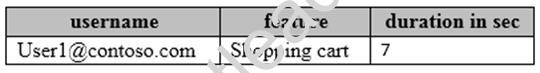

You have the sample input data described in the following table.

You have the sample output described in the following table.

How should you complete the script? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-us/azure/stream-analytics/stream-analytics-stream-analytics-query-patterns
