100% Guarantee of DOP-C01 exam cost materials and testing engine for Amazon-Web-Services certification for IT specialist, Real Success Guaranteed with Updated DOP-C01 pdf dumps vce Materials. 100% PASS AWS Certified DevOps Engineer- Professional exam Today!

Online Amazon-Web-Services DOP-C01 free dumps demo Below:

NEW QUESTION 1

You are a DevOps engineer for your company. There is a requirement to host a custom application, which has custom dependencies, for a development team. This needs to be done using an AWS service. Which of the following is the ideal way to fulfil this requirement?

- A. Package the application and dependencies with Docker, and deploy the Docker container with CloudFormation.

- B. Package the application and dependencies with Docker, and deploy the Docker container with Elastic Beanstalk.

- C. Package the application and dependencies in an S3 file, and deploy the Docker container with Elastic Beanstalk.

- D. Package the application and dependencies within Elastic Beanstalk, and deploy with Elastic Beanstalk.

Answer: B

Explanation:

The AWS documentation mentions:

Elastic Beanstalk supports the deployment of web applications from Docker containers. With Docker containers, you can define your own runtime environment. You can choose your own platform, programming language, and any application dependencies (such as package managers or tools) that aren't supported by other platforms. Docker containers are self-contained and include all the configuration information and software your web application requires to run.

For more information on Elastic Beanstalk and Docker, please visit the below URL: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker.html

NEW QUESTION 2

Which of the following is a reliable and durable logging solution to track changes made to your AWS resources?

- A. Create a new CloudTrail trail with one new S3 bucket to store the logs and with the global services option selected. Use IAM roles, S3 bucket policies and Multi-Factor Authentication (MFA) Delete on the S3 bucket that stores your logs.

- B. Create a new CloudTrail trail with one new S3 bucket to store the logs. Configure SNS to send log file delivery notifications to your management system. Use IAM roles and S3 bucket policies on the S3 bucket that stores your logs.

- C. Create a new CloudTrail trail with an existing S3 bucket to store the logs and with the global services option selected. Use S3 ACLs and Multi-Factor Authentication (MFA) Delete on the S3 bucket that stores your logs.

- D. Create three new CloudTrail trails with three new S3 buckets to store the logs: one for the AWS Management Console, one for AWS SDKs and one for command line tools. Use IAM roles and S3 bucket policies on the S3 buckets that store your logs.

Answer: A

Explanation:

AWS Identity and Access Management (IAM) is integrated with AWS CloudTrail, a service that logs AWS events made by or on behalf of your AWS account. CloudTrail logs authenticated AWS API calls and also AWS sign-in events, and collects this event information in files that are delivered to Amazon S3 buckets. You need to ensure that all services are included. Hence option B is partially correct.

Options B and D are wrong because they only add overhead: three S3 buckets and SNS notifications.

For more information on CloudTrail, please visit the below URL:

• http://docs.aws.amazon.com/IAM/latest/UserGuide/cloudtrail-integration.html

NEW QUESTION 3

You need to scale an RDS deployment. You are operating at 10% writes and 90% reads, based on your logging. How best can you scale this in a simple way?

- A. Create a second master RDS instance and peer the RDS groups.

- B. Cache all the database responses on the read side with CloudFront.

- C. Create read replicas for RDS since the load is mostly reads.

- D. Create a Multi-AZ RDS installation and route read traffic to the standby.

Answer: C

Explanation:

Amazon RDS Read Replicas provide enhanced performance and durability for database (DB) instances. This replication feature makes it easy to elastically scale out beyond the capacity constraints of a single DB Instance for read-heavy database workloads. You can create one or more replicas of a given source DB Instance and serve high-volume application read traffic from multiple copies of your data, thereby increasing aggregate read throughput. Read replicas can also be promoted when needed to become standalone DB instances.

Option A is invalid because you would need to maintain the synchronization yourself with a secondary instance.

Option B is invalid because you are introducing another layer unnecessarily when read replicas are already available.

Option D is invalid because Multi-AZ is used only for standby instances.

For more information on Read Replicas, please refer to the below link: https://aws.amazon.com/rds/details/read-replicas/
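As a rough illustration of how a read replica might be created programmatically, the sketch below wraps the RDS `CreateDBInstanceReadReplica` call. It assumes the caller passes in a boto3 RDS client (for example `boto3.client("rds")`); the function name and the instance identifiers in the usage note are hypothetical.

```python
def add_read_replica(rds_client, source_id, replica_id):
    """Create one read replica of an existing RDS instance.

    rds_client is assumed to be a boto3 RDS client supplied by the caller.
    Returns the identifier of the replica that RDS reports back.
    """
    response = rds_client.create_db_instance_read_replica(
        DBInstanceIdentifier=replica_id,       # name for the new replica
        SourceDBInstanceIdentifier=source_id,  # existing instance to copy from
    )
    return response["DBInstance"]["DBInstanceIdentifier"]
```

A call such as `add_read_replica(boto3.client("rds"), "orders-db", "orders-db-replica-1")` would request one replica; the application then still has to point its read traffic at the replica's endpoint.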

NEW QUESTION 4

Your development team is developing a mobile application that accesses resources in AWS. The users accessing this application will be logging in via Facebook and Google. Which of the following AWS mechanisms would you use to authenticate users for the application that needs to access AWS resources?

- A. Use separate IAM users that correspond to each Facebook and Google user

- B. Use separate IAM roles that correspond to each Facebook and Google user

- C. Use web identity federation to authenticate the users

- D. Use AWS policies to authenticate the users

Answer: C

Explanation:

The AWS documentation mentions the following:

You can directly configure individual identity providers to access AWS resources using web identity federation. AWS currently supports authenticating users using web identity federation through several identity providers:

• Login with Amazon

• Facebook Login

• Google Sign-in

For more information on web identity federation, please visit the below URL:

• http://docs.aws.amazon.com/sdk-for-javascript/v2/developer-guide/loading-browser-credentials-federated-id.html

NEW QUESTION 5

You've been tasked with building out a duplicate environment in another region for disaster recovery purposes. Part of your environment relies on EC2 instances with preconfigured software. What steps would you take to configure the instances in another region? Choose the correct answer from the options below

- A. Create an AMI of the EC2 instance

- B. Create an AMI of the EC2 instance and copy the AMI to the desired region

- C. Make the EC2 instance shareable among other regions through IAM permissions

- D. None of the above

Answer: B

Explanation:

You can copy an Amazon Machine Image (AMI) within or across an AWS region using the AWS Management Console, the AWS command line tools or SDKs, or the Amazon EC2 API, all of which support the CopyImage action. You can copy both Amazon EBS-backed AMIs and instance store-backed AMIs. You can copy AMIs with encrypted snapshots and encrypted AMIs.

For more information on copying AMIs, please refer to the below link:

• http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/CopyingAMIs.html
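The CopyImage action mentioned above could be driven from the SDK roughly as follows. This is a minimal sketch that assumes the caller supplies a boto3 EC2 client created for the destination region; the function name and the example AMI ID and regions in the usage note are hypothetical.

```python
def copy_ami_to_region(dest_ec2_client, source_image_id, source_region, name):
    """Copy an AMI into the region that dest_ec2_client is bound to.

    dest_ec2_client is assumed to be a boto3 EC2 client for the DESTINATION
    region; CopyImage is issued there and pulls from source_region.
    Returns the ID of the new AMI in the destination region.
    """
    response = dest_ec2_client.copy_image(
        Name=name,                      # name for the copied AMI
        SourceImageId=source_image_id,  # AMI to copy
        SourceRegion=source_region,     # region where that AMI lives
    )
    return response["ImageId"]
```

For instance, `copy_ami_to_region(boto3.client("ec2", region_name="us-west-2"), "ami-0123456789abcdef0", "us-east-1", "dr-web-server")` would request a copy into us-west-2. The copied AMI gets a new ID, so launch templates in the DR region need to reference that new ID.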

NEW QUESTION 6

Management has reported an increase in the monthly bill from Amazon Web Services, and they are extremely concerned with this increased cost. Management has asked you to determine the exact cause of this increase. After reviewing the billing report, you notice an increase in the data transfer cost. How can you provide management with a better insight into data transfer use?

- A. Update your Amazon CloudWatch metrics to use five-second granularity, which will give better detailed metrics that can be combined with your billing data to pinpoint anomalies.

- B. Use Amazon CloudWatch Logs to run a map-reduce on your logs to determine high usage and data transfer.

- C. Deliver custom metrics to Amazon CloudWatch per application that breaks down application data transfer into multiple, more specific data points.

- D. Using Amazon CloudWatch metrics, pull your Elastic Load Balancing outbound data transfer metrics monthly, and include them with your billing report to show which application is causing higher bandwidth usage.

Answer: C

Explanation:

You can publish your own metrics to CloudWatch using the AWS CLI or an API. You can view statistical graphs of your published metrics with the AWS Management Console.

CloudWatch stores data about a metric as a series of data points. Each data point has an associated time stamp. You can even publish an aggregated set of data points called a statistic set.

If you have custom metrics specific to your application, you can give a breakdown to the management on the exact issue.

Option A won't be sufficient to provide better insights.

Option B is an overhead when you can make the application publish custom metrics.

Option D is invalid because the ELB metrics alone will not give the entire picture.

For more information on custom metrics, please refer to the below AWS document link: http://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/publishingMetrics.html
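To make the per-application breakdown concrete, here is a small sketch of what a custom data-transfer metric might look like in the shape accepted by CloudWatch's `PutMetricData`. The namespace, metric name, dimension name and helper function are all hypothetical choices for illustration.

```python
def build_transfer_metric(app_name, bytes_out):
    """Build PutMetricData arguments for one application's outbound transfer.

    The namespace, metric name and dimension name are illustrative
    assumptions, not values prescribed by AWS.
    """
    return {
        "Namespace": "Custom/DataTransfer",
        "MetricData": [
            {
                "MetricName": "BytesTransferredOut",
                # One dimension per application lets the data be split by app.
                "Dimensions": [{"Name": "Application", "Value": app_name}],
                "Value": float(bytes_out),
                "Unit": "Bytes",
            }
        ],
    }
```

With a boto3 CloudWatch client, the payload could be sent with `cloudwatch.put_metric_data(**build_transfer_metric("checkout", 1_500_000))`. Publishing one dimension value per application is what allows the bill increase to be attributed to a specific application.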

NEW QUESTION 7

When an Auto Scaling group is running in Amazon Elastic Compute Cloud (EC2), your application rapidly scales up and down in response to load within a 10-minute window; however, after the load peaks, you begin to see problems in your configuration management system where previously terminated Amazon EC2 resources are still showing as active. What would be a reliable and efficient way to handle the cleanup of Amazon EC2 resources within your configuration management system? Choose two answers from the options given below

- A. Write a script that is run by a daily cron job on an Amazon EC2 instance and that executes API Describe calls of the EC2 Auto Scaling group and removes terminated instances from the configuration management system.

- B. Configure an Amazon Simple Queue Service (SQS) queue for Auto Scaling actions that has a script that listens for new messages and removes terminated instances from the configuration management system.

- C. Use your existing configuration management system to control the launching and bootstrapping of instances to reduce the number of moving parts in the automation.

- D. Write a small script that is run during Amazon EC2 instance shutdown to de-register the resource from the configuration management system.

Answer: AD

Explanation:

There is a rich set of CLI commands available for EC2 instances. The CLI reference is located at the following link:

• http://docs.aws.amazon.com/cli/latest/reference/ec2/

You can then use the describe-instances command to describe the EC2 instances.

If you specify one or more instance IDs, Amazon EC2 returns information for those instances. If you do not specify instance IDs, Amazon EC2 returns information for all relevant instances. If you specify an instance ID that is not valid, an error is returned. If you specify an instance that you do not own, it is not included in the returned results.

• http://docs.aws.amazon.com/cli/latest/reference/ec2/describe-instances.html

You can use the describe-instances output to find the instances that need to be removed from the configuration management system.
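The cleanup script described in option A might process a describe-instances response along these lines. This is only a sketch: it works on a dictionary in the shape returned by the EC2 DescribeInstances API, and the function names and the idea of modelling the configuration management inventory as a set of instance IDs are assumptions made for illustration.

```python
def terminated_instance_ids(describe_response):
    """Collect the IDs of instances whose state is 'terminated'."""
    ids = []
    for reservation in describe_response.get("Reservations", []):
        for instance in reservation.get("Instances", []):
            if instance["State"]["Name"] == "terminated":
                ids.append(instance["InstanceId"])
    return ids


def prune_inventory(inventory_ids, describe_response):
    """Return the inventory with terminated instances removed.

    inventory_ids stands in for whatever the configuration management
    system tracks; a real script would call that system's own API.
    """
    gone = set(terminated_instance_ids(describe_response))
    return {instance_id for instance_id in inventory_ids if instance_id not in gone}
```

A daily cron job would fetch the response (for example with `aws ec2 describe-instances` or the SDK equivalent) and pass it to `prune_inventory`, while the shutdown script from option D covers instances that stop cleanly.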

NEW QUESTION 8

Which of the following are the basic stages of a CI/CD pipeline? Choose 3 answers from the options below

- A. Source Control

- B. Build

- C. Run

- D. Production

Answer: ABD

Explanation:

The below diagram shows the stages of a typical CI/CD pipeline

For more information on AWS Continuous Integration, please visit the below URL: https://da.wsstatic.com/whitepapers/DevOps/practicing-continuous-integration-continuous-delivery-on-AWS.pdf

NEW QUESTION 9

You are working with a customer who is using Chef Configuration management in their data center. Which service is designed to let the customer leverage existing Chef recipes in AWS?

- A. Amazon Simple Workflow Service

- B. AWS Elastic Beanstalk

- C. AWS CloudFormation

- D. AWS OpsWorks

Answer: D

Explanation:

AWS OpsWorks is a configuration management service that helps you configure and operate applications of all shapes and sizes using Chef. You can define the application's architecture and the specification of each component including package installation, software configuration and resources such as storage. Start from templates for common technologies like application servers and databases or build your own to perform any task that can be scripted. AWS OpsWorks includes automation to scale your application based on time or load and dynamic configuration to orchestrate changes as your environment scales.

For more information on OpsWorks, please visit the link:

• https://aws.amazon.com/opsworks/

NEW QUESTION 10

Your serverless architecture using AWS API Gateway, AWS Lambda, and AWS DynamoDB experienced a large increase in traffic to a sustained 3000 requests per second, and a dramatic increase in failure rates. Your requests, during normal operation, last 500 milliseconds on average. Your DynamoDB table did not exceed 50% of provisioned throughput, and table primary keys are designed correctly. What is the most likely issue?

- A. Your API Gateway deployment is throttling your requests.

- B. Your AWS API Gateway deployment is bottlenecking on request (de)serialization.

- C. You did not request a limit increase on concurrent Lambda function executions.

- D. You used Consistent Read requests on DynamoDB and are experiencing semaphore lock.

Answer: C

Explanation:

Every Lambda function is allocated with a fixed amount of specific resources regardless of the memory allocation, and each function is allocated with a fixed amount of code storage per function and per account.

By default, AWS Lambda limits the total concurrent executions across all functions within a given region to 1000.

For more information on concurrent executions, please visit the below URL: http://docs.aws.amazon.com/lambda/latest/dg/concurrent-executions.html
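A quick back-of-the-envelope check shows why the concurrency limit is the likely culprit. The sketch below estimates the number of simultaneous executions as request rate multiplied by average duration, using the figures from the question and the default limit of 1000 quoted above; the function name is hypothetical and the estimate ignores bursts and retries.

```python
def required_concurrency(requests_per_second, avg_duration_seconds):
    """Estimate steady-state concurrent executions (rate x duration)."""
    return requests_per_second * avg_duration_seconds


DEFAULT_REGIONAL_LIMIT = 1000  # default quoted in the explanation above

needed = required_concurrency(3000, 0.5)  # 3000 req/s, 500 ms each
over_limit = needed > DEFAULT_REGIONAL_LIMIT
print(needed, over_limit)  # 1500.0 True
```

Roughly 1500 concurrent executions are needed against a default of 1000, so a share of the invocations would be throttled unless a limit increase is requested.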

NEW QUESTION 11

Your company has a set of resources hosted in AWS. Your IT Supervisor is concerned with the costs being incurred with the current set of AWS resources and wants to monitor the cost usage. Which of the following mechanisms can be used to monitor the costs of the AWS resources and also look at the possibility of cost optimization? Choose 3 answers from the options given below

- A. Use the Cost Explorer to see the costs of AWS resources

- B. Create budgets in the billing section so that budgets are set beforehand

- C. Send all logs to CloudWatch Logs and inspect the logs for billing details

- D. Consider using the Trusted Advisor

Answer: ABD

Explanation:

The AWS Documentation mentions the following

1) For a quick, high-level analysis use Cost Explorer, which is a free tool that you can use to view graphs of your AWS spend data. It includes a variety of filters and preconfigured views, as well as forecasting capabilities. Cost Explorer displays data from the last 13 months, the current month, and the forecasted costs for the next three months, and it updates this data daily.

2) Consider using budgets if you have a defined spending plan for a project or service and you want to track how close your usage and costs are to exceeding your budgeted amount. Budgets use data from Cost Explorer to provide you with a quick way to see your usage-to-date and current estimated charges from AWS. You can also set up notifications that warn you if you exceed or are about to exceed your budgeted amount.

3) Visit the AWS Trusted Advisor console regularly. Trusted Advisor works like a customized cloud expert, analyzing your AWS environment and providing best practice recommendations to help you save money, improve system performance and reliability, and close security gaps.

For more information on cost optimization, please visit the below URL:

• https://aws.amazon.com/answers/account-management/cost-optimization-monitor/

NEW QUESTION 12

Your company has multiple applications running on AWS. Your company wants to develop a tool that notifies on-call teams immediately via email when an alarm is triggered in your environment. You have multiple on-call teams that work different shifts, and the tool should handle notifying the correct teams at the correct times. How should you implement this solution?

- A. Create an Amazon SNS topic and an Amazon SQS queue. Configure the Amazon SQS queue as a subscriber to the Amazon SNS topic. Configure CloudWatch alarms to notify this topic when an alarm is triggered. Create an Amazon EC2 Auto Scaling group with both minimum and desired instances configured to 0. Worker nodes in this group spawn when messages are added to the queue. Workers then use Amazon Simple Email Service to send messages to your on-call teams.

- B. Create an Amazon SNS topic and configure your on-call team email addresses as subscribers. Use the AWS SDK tools to integrate your application with Amazon SNS and send messages to this new topic. Notifications will be sent to on-call users when a CloudWatch alarm is triggered.

- C. Create an Amazon SNS topic and configure your on-call team email addresses as subscribers. Create a secondary Amazon SNS topic for alarms and configure your CloudWatch alarms to notify this topic when triggered. Create an HTTP subscriber to this topic that notifies your application via HTTP POST when an alarm is triggered. Use the AWS SDK tools to integrate your application with Amazon SNS and send messages to the first topic so that on-call engineers receive alerts.

- D. Create an Amazon SNS topic for each on-call group, and configure each of these with the team member emails as subscribers. Create another Amazon SNS topic and configure your CloudWatch alarms to notify this topic when triggered. Create an HTTP subscriber to this topic that notifies your application via HTTP POST when an alarm is triggered. Use the AWS SDK tools to integrate your application with Amazon SNS and send messages to the correct team topic when on shift.

Answer: D

Explanation:

Option D fulfils all the requirements:

1) First, create an SNS topic for each group so that the required members receive the emails.

2) Ensure the application uses the HTTPS endpoint and the SDK to publish messages.

Option A is invalid because the SQS service is not required.

Options B and C are incorrect. As per the requirement, we need to notify only those on-call teams who are working in that particular shift when an alarm is triggered. The notification need not be sent to all the on-call teams of the company. With options B and C, since we are not configuring an SNS topic for each on-call team, the notifications will be sent to all the on-call teams. Hence these two options are invalid.

For more information on setting up notifications, please refer to the below AWS document link: http://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/US_SetupSNS.html
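The routing step in option D, where the application forwards an alarm to the topic of the team currently on shift, could look something like the sketch below. The shift boundaries, topic ARNs and function names are invented for illustration, and the SNS client is assumed to be a boto3 SNS client passed in by the caller.

```python
# Hypothetical shift table: (start hour inclusive, end hour exclusive, topic ARN).
SHIFT_TOPICS = [
    (0, 8, "arn:aws:sns:us-east-1:123456789012:oncall-night"),
    (8, 16, "arn:aws:sns:us-east-1:123456789012:oncall-day"),
    (16, 24, "arn:aws:sns:us-east-1:123456789012:oncall-evening"),
]


def topic_for_hour(hour):
    """Return the SNS topic ARN of the team on shift at the given hour (0-23)."""
    for start, end, topic_arn in SHIFT_TOPICS:
        if start <= hour < end:
            return topic_arn
    raise ValueError(f"hour out of range: {hour}")


def notify_on_call(sns_client, hour, alarm_name):
    """Publish the alarm to the on-shift team's topic and return that ARN."""
    topic_arn = topic_for_hour(hour)
    sns_client.publish(
        TopicArn=topic_arn,
        Subject=f"ALARM: {alarm_name}",
        Message=f"CloudWatch alarm '{alarm_name}' was triggered.",
    )
    return topic_arn
```

The application would call `notify_on_call` from the handler that receives the HTTP POST for the alarm topic, so only the subscribers of the matching team topic get the email.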

NEW QUESTION 13

Which of the following is the default deployment mechanism used by Elastic Beanstalk when the application is created via Console or EBCLI?

- A. All at Once

- B. Rolling Deployments

- C. Rolling with additional batch

- D. Immutable

Answer: B

Explanation:

The AWS documentation mentions

AWS Elastic Beanstalk provides several options for how deployments are processed, including deployment policies (All at once, Rolling, Rolling with additional batch, and Immutable) and options that let you configure batch size and health check behavior during deployments. By default, your environment uses rolling deployments if you created it with the console or EB CLI, or all at once deployments if you created it with a different client (API, SDK or AWS CLI).

For more information on Elastic Beanstalk deployments, please refer to the below link:

• http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/using-features.rolling-version-deploy.html

NEW QUESTION 14

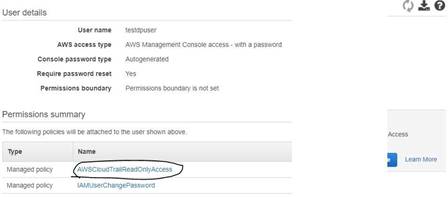

An audit is going to be conducted for your company's AWS account. Which of the following steps will ensure that the auditor has the right access to the logs of your AWS account?

- A. Enable S3 and ELB logs. Send the logs as a zip file to the IT Auditor.

- B. Ensure CloudTrail is enabled. Create a user account for the Auditor and attach the AWSCloudTrailReadOnlyAccess policy to the user.

- C. Ensure that CloudTrail is enabled. Create a user for the IT Auditor and ensure that full control is given to the user for CloudTrail.

- D. Enable CloudWatch logs. Create a user for the IT Auditor and ensure that full control is given to the user for the CloudWatch logs.

Answer: B

Explanation:

The AWS Documentation clearly mentions the below

AWS CloudTrail is an AWS service that helps you enable governance, compliance, and operational and risk auditing of your AWS account. Actions taken by a user, role, or an AWS service are recorded as events in CloudTrail. Events include actions taken in the AWS Management Console, AWS Command Line Interface, and AWS SDKs and APIs.

For more information on CloudTrail, please visit the below URL:

• http://docs.aws.amazon.com/awscloudtrail/latest/userguide/cloudtrail-user-guide.html

NEW QUESTION 15

The development team has developed a new feature that uses an AWS service and wants to test it from inside a staging VPC. How should you test this feature with the fastest turnaround time?

- A. Launch an Amazon Elastic Compute Cloud (EC2) instance in the staging VPC in response to a development request, and use configuration management to set up the application. Run any testing harnesses to verify application functionality and then use Amazon Simple Notification Service (SNS) to notify the development team of the results.

- B. Use an Amazon EC2 instance that frequently polls the version control system to detect the new feature, use AWS CloudFormation and Amazon EC2 user data to run any testing harnesses to verify application functionality and then use Amazon SNS to notify the development team of the results.

- C. Use an Elastic Beanstalk application that polls the version control system to detect the new feature, use AWS CloudFormation and Amazon EC2 user data to run any testing harnesses to verify application functionality and then use Amazon Kinesis to notify the development team of the results.

- D. Use AWS CloudFormation to launch an Amazon EC2 instance, use Amazon EC2 user data to run any testing harnesses to verify application functionality and then use Amazon Kinesis to notify the development team of the results.

Answer: A

Explanation:

Using Amazon Kinesis would just take more time in setup and would not be ideal to notify the relevant team in the shortest time possible.

Since the test needs to be conducted in the staging VPC, it is best to launch the EC2 instance in the staging VPC.

For more information on the Simple Notification service, please visit the link:

• https://aws.amazon.com/sns/

NEW QUESTION 16

You currently have an application with an Auto Scaling group with an Elastic Load Balancer configured in AWS. After deployment, users are complaining of slow response time for your application. Which of the following can be used as a start to diagnose the issue?

- A. Use CloudWatch to monitor the HealthyHostCount metric

- B. Use CloudWatch to monitor the ELB latency

- C. Use CloudWatch to monitor the CPU Utilization

- D. Use CloudWatch to monitor the Memory Utilization

Answer: B

Explanation:

High latency on the ELB side can be caused by several factors, such as:

• Network connectivity

• ELB configuration

• Backend web application server issues

For more information on ELB latency, please refer to the below link:

• https://aws.amazon.com/premiumsupport/knowledge-center/elb-latency-troubleshooting/

NEW QUESTION 17

You work for an insurance company and are responsible for the day-to-day operations of your company's online quote system used to provide insurance quotes to members of the public. Your company wants to use the application logs generated by the system to better understand customer behavior. Industry regulations also require that you retain all application logs for the system indefinitely in order to investigate fraudulent claims in the future. You have been tasked with designing a log management system with the following requirements:

- All log entries must be retained by the system, even during unplanned instance failure.

- The customer insight team requires immediate access to the logs from the past seven days.

- The fraud investigation team requires access to all historic logs, but will wait up to 24 hours before these logs are available.

How would you meet these requirements in a cost-effective manner? Choose three answers from the options below

- A. Configure your application to write logs to the instance's ephemeral disk, because this storage is free and has good write performance. Create a script that moves the logs from the instance to Amazon S3 once an hour.

- B. Write a script that is configured to be executed when the instance is stopped or terminated and that will upload any remaining logs on the instance to Amazon S3.

- C. Create an Amazon S3 lifecycle configuration to move log files from Amazon S3 to Amazon Glacier after seven days.

- D. Configure your application to write logs to the instance's default Amazon EBS boot volume, because this storage already exists. Create a script that moves the logs from the instance to Amazon S3 once an hour.

- E. Configure your application to write logs to a separate Amazon EBS volume with the "delete on termination" field set to false. Create a script that moves the logs from the instance to Amazon S3 once an hour.

- F. Create a housekeeping script that runs on a T2 micro instance managed by an Auto Scaling group for high availability. The script uses the AWS API to identify any unattached Amazon EBS volumes containing log files. Your housekeeping script will mount the Amazon EBS volume, upload all logs to Amazon S3, and then delete the volume.

Answer: CEF

Explanation:

Since all logs need to be stored indefinitely, Glacier is the best option for this. One can use lifecycle rules to move the data from S3 to Glacier.

Lifecycle configuration enables you to specify the lifecycle management of objects in a bucket. The configuration is a set of one or more rules, where each rule defines an action for Amazon S3 to apply to a group of objects. These actions can be classified as follows:

• Transition actions - In which you define when objects transition to another storage class. For example, you may choose to transition objects to the STANDARD_IA (IA, for infrequent access) storage class 30 days after creation, or archive objects to the GLACIER storage class one year after creation.

• Expiration actions - In which you specify when the objects expire. Then Amazon S3 deletes the expired objects on your behalf.

For more information on Lifecycle events, please refer to the below link:

• http://docs.aws.amazon.com/AmazonS3/latest/dev/object-lifecycle-mgmt.html

You can use scripts to put the logs onto a new volume and then transfer those logs to S3.
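For the seven-day transition to Glacier in option C, a lifecycle rule might be assembled as in the sketch below. The dictionary follows the shape expected by the S3 `PutBucketLifecycleConfiguration` API; the helper name, rule ID format and the `logs/` prefix in the usage note are assumptions for illustration.

```python
def glacier_after_days(prefix, days):
    """Build a lifecycle configuration that archives objects to Glacier.

    Objects under `prefix` transition to the GLACIER storage class once
    they are `days` days old. No expiration action is added, so the logs
    are kept indefinitely.
    """
    return {
        "Rules": [
            {
                "ID": f"archive-{prefix.strip('/')}-after-{days}d",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [{"Days": days, "StorageClass": "GLACIER"}],
            }
        ]
    }
```

With a boto3 S3 client this could be applied as `s3.put_bucket_lifecycle_configuration(Bucket="my-log-bucket", LifecycleConfiguration=glacier_after_days("logs/", 7))`, where the bucket name is a placeholder. Leaving out an expiration action matches the requirement to retain all logs.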

Note:

To move the logs from the EBS volume to S3, we have some custom scripts running in the background. In order to meet the minimum memory requirements of the OS and the applications for the script to execute, we can use a cost-effective EC2 instance.

Considering the computing resource requirements of the instance and the cost factor, a t2.micro instance can be used in this case.

The following link provides more information on various T2 instances. https://docs.aws.amazon.com/AWSEC2/latest/WindowsGuide/t2-instances.html

The question is "How would you meet these requirements in a cost-effective manner? Choose three answers from the options below"

So here the user has to choose the three options that fulfill the requirement. Of the given six options, options C, E and F fulfill the requirement.

"The EC2 instances use EBS volumes, and the logs are stored on EBS volumes that are marked for non-termination" is one way to fulfill the requirement, so this shouldn't be an issue.

NEW QUESTION 18

You have an Autoscaling Group which is launching a set of t2.small instances. You now need to replace those instances with a larger instance type. How would you go about making this change in an ideal manner?

- A. Change the instance type in the current launch configuration to the new instance type.

- B. Create another Auto Scaling group and attach the new instance type.

- C. Create a new launch configuration with the new instance type and update your Auto Scaling group.

- D. Change the instance type of the underlying EC2 instance directly.

Answer: C

Explanation:

The AWS documentation mentions:

A launch configuration is a template that an Auto Scaling group uses to launch EC2 instances. When you create a launch configuration, you specify information for the instances such as the ID of the Amazon Machine Image (AMI), the instance type, a key pair, one or more security groups, and a block device mapping. If you've launched an EC2 instance before, you specified the same information in order to launch the instance. When you create an Auto Scaling group, you must specify a launch configuration. You can specify your launch configuration with multiple Auto Scaling groups.

However, you can only specify one launch configuration for an Auto Scaling group at a time, and you can't modify a launch configuration after you've created it.

Therefore, if you want to change the launch configuration for your Auto Scaling group, you must create a launch configuration and then update your Auto Scaling group with the new launch configuration.

For more information on launch configurations please see the below link:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/LaunchConfiguration.html
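The two-step change described above (create a new launch configuration, then point the group at it) might be scripted as follows. This is a sketch that assumes a boto3 Auto Scaling client is passed in; the function name and all names in the usage note are hypothetical.

```python
def switch_instance_type(asg_client, group_name, new_config_name, image_id, instance_type):
    """Create a launch configuration with a new instance type and attach it.

    asg_client is assumed to be a boto3 Auto Scaling client. Launch
    configurations are immutable, so a new one is created rather than
    editing the old one.
    """
    # Step 1: create the replacement launch configuration.
    asg_client.create_launch_configuration(
        LaunchConfigurationName=new_config_name,
        ImageId=image_id,
        InstanceType=instance_type,
    )
    # Step 2: update the group so new launches use it.
    asg_client.update_auto_scaling_group(
        AutoScalingGroupName=group_name,
        LaunchConfigurationName=new_config_name,
    )
    return new_config_name
```

For example, `switch_instance_type(boto3.client("autoscaling"), "web-asg", "web-lc-v2", "ami-0123456789abcdef0", "t2.large")`. A real configuration would usually also carry over the key pair, security groups and user data from the old one. Instances that are already running keep their old type until they are replaced.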

NEW QUESTION 19

Your application uses Amazon SQS and Auto Scaling to process background jobs. The Auto Scaling policy is based on the number of messages in the queue, with a maximum instance count of 100. Since the application was launched, the group has never scaled above 50. The Auto Scaling group has now scaled to 100, the queue size is increasing and very few jobs are being completed. The number of messages being sent to the queue is at normal levels. What should you do to identify why the queue size is unusually high and to reduce it?

- A. Temporarily increase the Auto Scaling group's desired value to 200. When the queue size has been reduced, reduce it to 50.

- B. Analyze the application logs to identify possible reasons for message processing failure and resolve the cause for failures.

- C. Create additional Auto Scaling groups enabling the processing of the queue to be performed in parallel.

- D. Analyze CloudTrail logs for Amazon SQS to ensure that the instances' Amazon EC2 role has permission to receive messages from the queue.

Answer: B

Explanation:

Here the best option is to look at the application logs and resolve the failure. You could be having a functionality issue in the application that is causing the messages to queue up and increase the fleet of instances in the Autoscaling group.

For more information on centralized logging system implementation in AWS, please visit this link: https://aws.amazon.com/answers/logging/centralized-logging/

NEW QUESTION 20

You have a current CloudFormation template defined in AWS. You need to change the current alarm threshold defined in the CloudWatch alarm. How can you achieve this?

- A. Currently, there is no option to change what is already defined in CloudFormation templates.

- B. Update the template and then update the stack with the new template. Automatically all resources will be changed in the stack.

- C. Update the template and then update the stack with the new template. Only those resources that need to be changed will be changed. All other resources which do not need to be changed will remain as they are.

- D. Delete the current CloudFormation template. Create a new one which will update the current resources.

Answer: C

Explanation:

Option A is incorrect because CloudFormation lets you update the resources in an existing stack.

Option B is incorrect because only those resources that need to be changed as part of the stack update are actually updated.

Option D is incorrect because deleting the stack is not the ideal option when you already have a change option available.

When you need to make changes to a stack's settings or change its resources, you update the stack instead of deleting it and creating a new stack. For example, if you have a stack with an EC2 instance, you can update the stack to change the instance's AMI ID.

When you update a stack, you submit changes, such as new input parameter values or an updated template. AWS CloudFormation compares the changes you submit with the current state of your stack and updates only the changed resources.

For more information on stack updates, please refer to the below link:

• http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/using-cfn-updating-stacks.html
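The idea that only changed resources are updated can be sketched with a plain comparison of two templates. This is a simplified illustration, not CloudFormation's actual comparison logic. The helper `changed_resources`, the logical IDs `CpuAlarm` and `LogBucket`, and the threshold values are hypothetical, and the alarm definition is trimmed to the fields needed for the example.

```python
import copy


def changed_resources(current, updated):
    """Return the logical IDs whose definitions differ between two templates."""
    old = current.get("Resources", {})
    new = updated.get("Resources", {})
    return sorted(
        name for name in old.keys() | new.keys() if old.get(name) != new.get(name)
    )


# Hypothetical template, trimmed to the parts relevant to the comparison.
current_template = {
    "Resources": {
        "CpuAlarm": {
            "Type": "AWS::CloudWatch::Alarm",
            "Properties": {"MetricName": "CPUUtilization", "Threshold": 70},
        },
        "LogBucket": {"Type": "AWS::S3::Bucket", "Properties": {}},
    }
}

# Change only the alarm threshold in a copy of the template.
updated_template = copy.deepcopy(current_template)
updated_template["Resources"]["CpuAlarm"]["Properties"]["Threshold"] = 85

print(changed_resources(current_template, updated_template))  # ['CpuAlarm']
```

Only `CpuAlarm` is reported as changed; `LogBucket` is identical in both templates and would be left as it is, which is the behaviour option C describes.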

NEW QUESTION 21

You are a DevOps Engineer for your company. You are responsible for creating CloudFormation templates for your company. There is a requirement to ensure that an S3 bucket is created for all resources in development for logging purposes. How would you achieve this?

- A. Create separate CloudFormation templates for development and production.

- B. Create a parameter in the CloudFormation template and then use the Condition clause in the template to create an S3 bucket if the parameter has a value of development.

- C. Create an S3 bucket beforehand and then just provide access based on the tag value mentioned in the CloudFormation template.

- D. Use the metadata section in the CloudFormation template to decide on whether to create the S3 bucket or not.

Answer: B

Explanation:

The AWS Documentation mentions the following:

You might use conditions when you want to reuse a template that can create resources in different contexts, such as a test environment versus a production environment. In your template, you can add an EnvironmentType input parameter, which accepts either prod or test as inputs. For the production environment, you might include Amazon EC2 instances with certain capabilities; however, for the test environment, you want to use reduced capabilities to save money. With conditions, you can define which resources are created and how they're configured for each environment type.

For more information on CloudFormation conditions, please visit the below URL: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/conditions-section-structure.html
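A minimal sketch of the approach in option B, written as a Python dictionary that serializes to a CloudFormation JSON template. The parameter name `EnvironmentType`, its allowed values, the condition name `IsDevelopment`, and the logical ID `LoggingBucket` are assumptions chosen for illustration. This is a fragment only; a real template would also define the application's other resources.

```python
import json

# Template fragment: the bucket is created only when the parameter
# equals "development". All names here are illustrative assumptions.
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Parameters": {
        "EnvironmentType": {
            "Type": "String",
            "AllowedValues": ["development", "production"],
            "Default": "production",
        }
    },
    "Conditions": {
        # True only when the stack is launched with EnvironmentType=development.
        "IsDevelopment": {
            "Fn::Equals": [{"Ref": "EnvironmentType"}, "development"]
        }
    },
    "Resources": {
        "LoggingBucket": {
            "Type": "AWS::S3::Bucket",
            # The resource-level Condition key ties creation to the condition.
            "Condition": "IsDevelopment",
        }
    },
}

template_json = json.dumps(template, indent=2)
print(template_json)
```

The same template can then be reused for both environments, with the parameter value deciding whether the logging bucket exists.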

NEW QUESTION 22

You need to grant a vendor access to your AWS account. They need to be able to read protected messages in a private S3 bucket at their leisure. They also use AWS. What is the best way to accomplish this?

- A. Create an IAM User with API Access Keys. Grant the User permissions to access the bucket. Give the vendor the AWS Access Key ID and AWS Secret Access Key for the User.

- B. Create an EC2 Instance Profile on your account. Grant the associated IAM role full access to the bucket. Start an EC2 instance with this Profile and give SSH access to the instance to the vendor.

- C. Create a cross-account IAM Role with permission to access the bucket, and grant permission to use the Role to the vendor AWS account.

- D. Generate a signed S3 PUT URL and a signed S3 PUT URL, both with wildcard values and 2 year duration. Pass the URLs to the vendor.

Answer: C

Explanation:

You can use AWS Identity and Access Management (IAM) roles and AWS Security Token Service (STS) to set up cross-account access between AWS accounts. When you assume an IAM role in another AWS account to obtain cross-account access to services and resources in that account, AWS CloudTrail logs the cross-account activity. For more information on cross-account access, please visit the below URL:

• https://aws.amazon.com/blogs/security/tag/cross-account-access/
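The two policy documents behind a cross-account role can be sketched as follows. The account ID `111122223333` and the bucket name `example-protected-messages` are placeholders, not values from the question, and the read-only action list is one reasonable choice rather than a required set.

```python
import json

VENDOR_ACCOUNT_ID = "111122223333"          # hypothetical vendor account
BUCKET_NAME = "example-protected-messages"  # hypothetical bucket name

# Trust policy: who may assume the role (the vendor's AWS account).
trust_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{VENDOR_ACCOUNT_ID}:root"},
            "Action": "sts:AssumeRole",
        }
    ],
}

# Permissions policy: what the role may do once assumed (read-only here).
permissions_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject", "s3:ListBucket"],
            "Resource": [
                f"arn:aws:s3:::{BUCKET_NAME}",
                f"arn:aws:s3:::{BUCKET_NAME}/*",
            ],
        }
    ],
}

print(json.dumps(trust_policy, indent=2))
print(json.dumps(permissions_policy, indent=2))
```

With this arrangement no long-lived access keys are handed over: the vendor assumes the role from their own account and receives temporary credentials from STS.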

NEW QUESTION 23

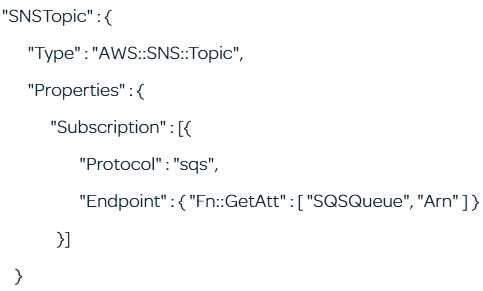

Explain what the following resource in a CloudFormation template does? Choose the best possible answer.

- A. Creates an SNS topic which allows SQS subscription endpoints to be added as a parameter on the template

- B. Creates an SNS topic that allows SQS subscription endpoints

- C. Creates an SNS topic and then invokes the call to create an SQS queue with a logical resource name of SQSQueue

- D. Creates an SNS topic and adds a subscription ARN endpoint for the SQS resource created under the logical name SQSQueue

Answer: D

Explanation:

The intrinsic function Fn::GetAtt returns the value of an attribute from a resource in the template. This has nothing to do with adding parameters (Option A is wrong), allowing endpoints (Option B is wrong), or invoking relevant calls (Option C is wrong).

For more information on the Fn::GetAtt function, please refer to the below link:

http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/intrinsic-function-reference-getatt.html
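The template in the question is an image and is not reproduced here. The sketch below is an assumed resource of the kind option D describes, written as a Python dictionary in CloudFormation JSON form. The logical ID `SNSTopic` is hypothetical; `SQSQueue` is the logical name mentioned in the options.

```python
import json

# Assumed reconstruction, not the template shown in the original image.
resources = {
    "SQSQueue": {"Type": "AWS::SQS::Queue"},
    "SNSTopic": {
        "Type": "AWS::SNS::Topic",
        "Properties": {
            "Subscription": [
                {
                    "Protocol": "sqs",
                    # Fn::GetAtt resolves to the ARN of the queue defined
                    # under the logical name SQSQueue in the same template.
                    "Endpoint": {"Fn::GetAtt": ["SQSQueue", "Arn"]},
                }
            ]
        },
    },
}

print(json.dumps({"Resources": resources}, indent=2))
```

The topic does not create the queue. It only reads the queue's `Arn` attribute and uses it as the subscription endpoint.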

NEW QUESTION 24

Your company uses AWS to host its resources. They have the following requirements:

1) Record all API calls and Transitions

2) Help in understanding what resources are there in the account

3) Facility to allow auditing credentials and logins

Which services would satisfy the above requirements?

- A. AWS Config, CloudTrail, IAM Credential Reports

- B. CloudTrail, IAM Credential Reports, AWS Config

- C. CloudTrail, AWS Config, IAM Credential Reports

- D. AWS Config, IAM Credential Reports, CloudTrail

Answer: C

Explanation:

You can use AWS CloudTrail to get a history of AWS API calls and related events for your account. This history includes calls made with the AWS Management Console, AWS Command Line Interface, AWS SDKs, and other AWS services. For more information on CloudTrail, please visit the below URL:

• http://docs.aws.amazon.com/awscloudtrail/latest/userguide/cloudtrail-user-guide.html

AWS Config is a service that enables you to assess, audit, and evaluate the configurations of your AWS resources. Config continuously monitors and records your AWS resource configurations and allows you to automate the evaluation of recorded configurations against desired configurations. With Config, you can review changes in configurations and relationships between AWS resources, dive into detailed resource configuration histories, and determine your overall compliance against the configurations specified in your internal guidelines. This enables you to simplify compliance auditing, security analysis, change management, and operational troubleshooting. For more information on the config service, please visit the below URL:

• https://aws.amazon.com/config/

You can generate and download a credential report that lists all users in your account and the status of their various credentials, including passwords, access keys, and MFA devices. You can get a credential report from the AWS Management Console, the AWS SDKs and Command Line Tools, or the IAM API. For more information on Credentials Report, please visit the below URL:

• http://docs.aws.amazon.com/IAM/latest/UserGuide/id_credentials_getting-report.html
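A credential report is delivered as CSV, so auditing it can be as simple as filtering rows. The sketch below works on a small hypothetical excerpt with only a few columns; the users and values are invented for illustration, and the helper name `users_without_mfa` is an assumption.

```python
import csv
import io

# Hypothetical excerpt of a credential report, reduced to a few columns.
SAMPLE_REPORT = """user,password_enabled,mfa_active,access_key_1_active
alice,true,true,false
bob,true,false,true
ci-bot,false,false,true
"""


def users_without_mfa(report_csv):
    """Return users who have a console password but no MFA device."""
    rows = csv.DictReader(io.StringIO(report_csv))
    return [
        row["user"]
        for row in rows
        if row["password_enabled"] == "true" and row["mfa_active"] == "false"
    ]


print(users_without_mfa(SAMPLE_REPORT))  # ['bob']
```

Here `ci-bot` is skipped because it has no console password, so only `bob` is flagged for follow-up.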

NEW QUESTION 25

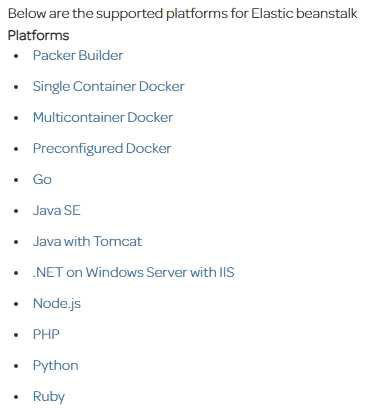

Which of the following is not a supported platform for the Elastic Beanstalk service?

- A. Java

- B. AngularJS

- C. PHP

- D. .Net

Answer: B

Explanation:

For more information on Elastic Beanstalk, please visit the below URL:

http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/concepts.platforms.html

