We provide real DOP-C01 exam questions and answers braindumps in two formats. Download PDF & Practice Tests. Pass Amazon-Web-Services DOP-C01 Exam quickly & easily. The DOP-C01 PDF type is available for reading and printing. You can print more and practice many times. With the help of our Amazon-Web-Services DOP-C01 dumps pdf and vce product and material, you can easily pass the DOP-C01 exam.

Free demo questions for Amazon-Web-Services DOP-C01 Exam Dumps Below:

NEW QUESTION 1

You have deployed a CloudFormation template which is used to spin up resources in your account. Which of the following statuses in CloudFormation represents a failure?

- A. UPDATE_COMPLETE_CLEANUP_IN_PROGRESS

- B. DELETE_COMPLETE

- C. ROLLBACK_IN_PROGRESS

- D. UPDATE_IN_PROGRESS

Answer: C

Explanation:

AWS CloudFormation provisions and configures resources by making calls to the AWS services that are described in your template.

After all the resources have been created, AWS CloudFormation reports that your stack has been created. You can then start using the resources in your stack. If stack creation fails, AWS CloudFormation rolls back your changes by deleting the resources that it created.

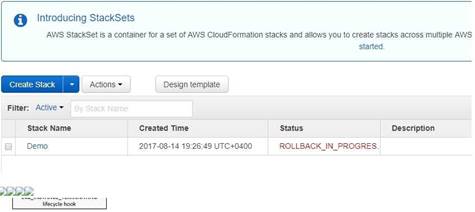

The snapshot from CloudFormation shows what happens when there is an error in the stack creation.

For more information on how CloudFormation works, please refer to the below link: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/cfn-whatis-howdoesitwork.html
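As a rough illustration of how the statuses in the options could be triaged, the sketch below flags a stack status as failure-related when it mentions a rollback or ends in `FAILED`. The helper name `indicates_failure` and the matching rule are assumptions made for this example, not an official CloudFormation classification.

```python
def indicates_failure(status: str) -> bool:
    """Return True when a stack status suggests that an operation failed.

    Heuristic (an assumption, not an AWS API): any status that mentions
    ROLLBACK or ends with FAILED is treated as failure-related.
    """
    status = status.upper()
    return "ROLLBACK" in status or status.endswith("FAILED")


# The four statuses offered as options in the question.
options = {
    "A": "UPDATE_COMPLETE_CLEANUP_IN_PROGRESS",
    "B": "DELETE_COMPLETE",
    "C": "ROLLBACK_IN_PROGRESS",
    "D": "UPDATE_IN_PROGRESS",
}

failing = [letter for letter, status in options.items() if indicates_failure(status)]
print(failing)
```

Under this heuristic only option C is flagged, which matches the answer given above.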

NEW QUESTION 2

Your company currently has a set of EC2 instances running a web application which sits behind an Elastic Load Balancer. You also have an Amazon RDS instance which is used by the web application. You have been asked to ensure that this architecture is self-healing and cost-effective. Which of the following would fulfil this requirement? Choose 2 answers from the options given below.

- A. Use CloudWatch metrics to check the utilization of the web layer. Use an Auto Scaling group to scale the web instances accordingly based on the CloudWatch metrics.

- B. Use CloudWatch metrics to check the utilization of the database servers. Use an Auto Scaling group to scale the database instances accordingly based on the CloudWatch metrics.

- C. Utilize the Read Replica feature for the Amazon RDS layer.

- D. Utilize the Multi-AZ feature for the Amazon RDS layer.

Answer: AD

Explanation:

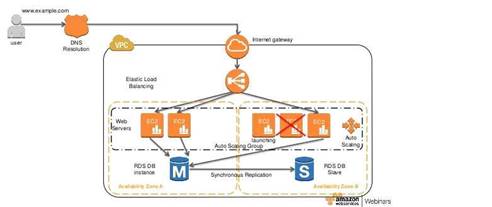

The diagram from AWS showcases a self-healing architecture where a set of EC2 servers acting as web servers is launched by an Auto Scaling group.

The AWS Documentation mentions the following

Amazon RDS Multi-AZ deployments provide enhanced availability and durability for Database (DB) Instances, making them a natural fit for production database workloads. When you provision a Multi-AZ DB Instance, Amazon RDS automatically creates a primary DB Instance and synchronously replicates the data to a standby instance in a different Availability Zone (AZ). Each AZ runs on its own physically distinct, independent infrastructure, and is engineered to be highly reliable. In case of an infrastructure failure, Amazon RDS performs an automatic failover to the standby (or to a read replica in the case of Amazon Aurora), so that you can resume database operations as soon as the failover is complete. Since the endpoint for your DB Instance remains the same after a failover, your application can resume database operation without the need for manual administrative intervention.

For more information on Multi-AZ RDS, please refer to the below link:

◆ https://aws.amazon.com/rds/details/multi-az/

NEW QUESTION 3

You need the absolute highest possible network performance for a cluster computing application. You already selected homogeneous instance types supporting 10 gigabit enhanced networking, made sure that your workload was network bound, and put the instances in a placement group. What is the last optimization you can make?

- A. Use 9001 MTU instead of 1500 for Jumbo Frames, to raise packet body to packet overhead ratios.

- B. Segregate the instances into different peered VPCs while keeping them all in a placement group, so each one has its own Internet Gateway.

- C. Bake an AMI for the instances and relaunch, so the instances are fresh in the placement group and do not have noisy neighbors.

- D. Turn off SYN/ACK on your TCP stack or begin using UDP for higher throughput.

Answer: A

Explanation:

Jumbo frames allow more than 1500 bytes of data by increasing the payload size per packet, and thus increasing the percentage of the packet that is not packet overhead. Fewer packets are needed to send the same amount of usable data. However, outside of a given AWS region (EC2-Classic), a single VPC, or a VPC peering connection, you will experience a maximum path of 1500 MTU. VPN connections and traffic sent over an Internet gateway are limited to 1500 MTU. If packets are over 1500 bytes, they are fragmented, or they are dropped if the Don't Fragment flag is set in the IP header.

For more information on Jumbo Frames, please visit the below URL: http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/network_mtu.html#jumbo_frame_instances
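The payload-to-overhead argument can be checked with a little arithmetic. The sketch below assumes 40 bytes of header per packet (a 20-byte IPv4 header plus a 20-byte TCP header, no options) and ignores Ethernet framing; those figures and the function names are assumptions chosen for illustration.

```python
import math

# Assumption: 20-byte IPv4 header + 20-byte TCP header, no options.
HEADER_BYTES = 40


def payload_bytes(mtu: int) -> int:
    """Usable data per packet once the assumed headers are subtracted."""
    return mtu - HEADER_BYTES


def payload_ratio(mtu: int) -> float:
    """Fraction of each packet that carries usable data."""
    return payload_bytes(mtu) / mtu


def packets_needed(total_bytes: int, mtu: int) -> int:
    """Number of packets required to carry total_bytes of usable data."""
    return math.ceil(total_bytes / payload_bytes(mtu))


for mtu in (1500, 9001):
    print(mtu, round(payload_ratio(mtu), 4), packets_needed(1_000_000, mtu))
```

Under these assumptions, sending 1,000,000 bytes takes 685 packets at MTU 1500 and 112 packets at MTU 9001, and the usable fraction of each packet rises from roughly 97.3% to roughly 99.6%.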

NEW QUESTION 4

You work as a DevOps Engineer for your company. There are currently a number of environments hosted via Elastic Beanstalk. There is a requirement to ensure that the rollback time for a new application version deployment is kept to a minimum. Which Elastic Beanstalk deployment method would fulfil this requirement?

- A. Rolling with additional batch

- B. All at once

- C. Blue/Green

- D. Rolling

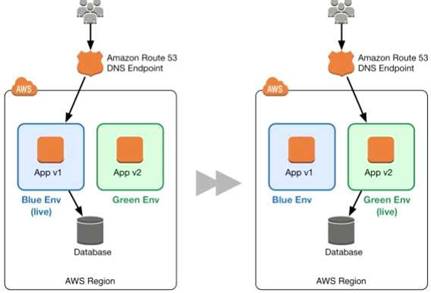

Answer: C

Explanation:

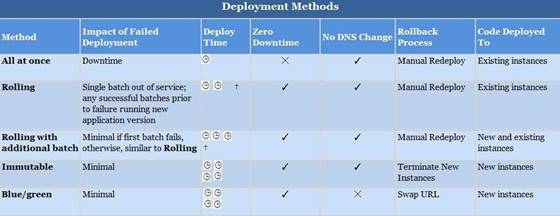

The table from the AWS documentation shows that the least amount of time is spent in rollbacks when it comes to Blue/Green deployments. This is because the only thing that needs to be done is for the environment URLs to be swapped.

For more information on Elastic Beanstalk deployment strategies, please visit the below URL: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/using-features.deploy-existing-version.html

NEW QUESTION 5

What is web identity federation?

- A. Use of an identity provider like Google or Facebook to become an AWS IAM user.

- B. Use of an identity provider like Google or Facebook to exchange for temporary AWS security credentials.

- C. Use of AWS IAM user tokens to log in as a Google or Facebook user.

- D. Use the STS service to create a user on AWS which will allow them to log in from a Facebook or Google app.

Answer: B

Explanation:

With web identity federation, you don't need to create custom sign-in code or manage your own user identities. Instead, users of your app can sign in using a well-known identity provider (IdP), such as Login with Amazon, Facebook, Google, or any other OpenID Connect (OIDC)-compatible IdP, receive an authentication token, and then exchange that token for temporary security credentials in AWS that map to an IAM role with permissions to use the resources in your AWS account. Using an IdP helps you keep your AWS account secure, because you don't have to embed and distribute long-term security credentials with your application.

For more information on Web Identity federation please refer to the below link:

http://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles_providers_oidc.html
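The token exchange described above maps onto the STS `AssumeRoleWithWebIdentity` operation. The following is a minimal sketch using boto3's `assume_role_with_web_identity` call; the role ARN, session name, token placeholder, and helper function names are hypothetical values introduced for illustration.

```python
def build_web_identity_request(role_arn: str, session_name: str, oidc_token: str,
                               duration_seconds: int = 3600) -> dict:
    """Build keyword arguments for the STS AssumeRoleWithWebIdentity call."""
    return {
        "RoleArn": role_arn,
        "RoleSessionName": session_name,
        "WebIdentityToken": oidc_token,
        "DurationSeconds": duration_seconds,
    }


def fetch_temporary_credentials(request: dict) -> dict:
    """Exchange the IdP token for temporary credentials.

    Needs boto3 and network access, so it is not called in this sketch.
    """
    import boto3  # imported lazily so the builder above works without boto3

    sts = boto3.client("sts")
    response = sts.assume_role_with_web_identity(**request)
    # The Credentials entry holds AccessKeyId, SecretAccessKey,
    # SessionToken and Expiration.
    return response["Credentials"]


request = build_web_identity_request(
    role_arn="arn:aws:iam::123456789012:role/ExampleWebIdentityRole",  # hypothetical
    session_name="example-app-user",                                    # hypothetical
    oidc_token="<token returned by the identity provider>",             # placeholder
)
print(sorted(request))
```

Keeping the request builder separate from the network call makes it easy to inspect what would be sent before any credentials are requested.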

NEW QUESTION 6

Which of the following features of the Auto Scaling group ensures that additional instances are neither launched nor terminated before the previous scaling activity takes effect?

- A. Termination policy

- B. Cool down period

- C. Ramp up period

- D. Creation policy

Answer: B

Explanation:

The AWS documentation mentions

The Auto Scaling cooldown period is a configurable setting for your Auto Scaling group that helps to ensure that Auto Scaling doesn't launch or terminate additional instances before the previous scaling activity takes effect. After the Auto Scaling group dynamically scales using a simple scaling policy, Auto Scaling waits for the cooldown period to complete before resuming scaling activities. When you manually scale your Auto Scaling group, the default is not to wait for the cooldown period, but you can override the default and honor the cooldown period. If an instance becomes unhealthy, Auto Scaling does not wait for the cooldown period to complete before replacing the unhealthy instance.

For more information on the cooldown period, please refer to the below URL:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/Cooldown.html
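To make the cooldown behaviour concrete, here is a small simulation of a gate that rejects scaling requests arriving before the cooldown has elapsed. The `CooldownGate` class is a hypothetical model written for this example, not part of any AWS SDK, and the 300-second value is assumed here as the cooldown length.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CooldownGate:
    """Toy model of a simple-scaling cooldown (not an AWS API)."""

    cooldown_seconds: int = 300  # assumed cooldown length for this sketch
    last_activity_at: Optional[float] = None

    def try_scale(self, now: float) -> bool:
        """Allow a scaling activity only if the cooldown has elapsed."""
        in_cooldown = (
            self.last_activity_at is not None
            and now - self.last_activity_at < self.cooldown_seconds
        )
        if in_cooldown:
            return False
        self.last_activity_at = now
        return True


gate = CooldownGate()
# Scaling requests arriving at these times (seconds since the first one).
decisions = [gate.try_scale(t) for t in (0, 120, 299, 300, 450, 600)]
print(decisions)
```

The requests at 120, 299, and 450 seconds are rejected because each arrives less than 300 seconds after the last accepted activity.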

NEW QUESTION 7

You have an application running on Amazon EC2 in an Auto Scaling group. Instances are being bootstrapped dynamically, and the bootstrapping takes over 15 minutes to complete. You find that instances are reported by Auto Scaling as being In Service before bootstrapping has completed. You are receiving application alarms related to new instances before they have completed bootstrapping, which is causing confusion. You find the cause: your application monitoring tool is polling the Auto Scaling Service API for instances that are In Service, and creating alarms for new previously unknown instances. Which of the following will ensure that new instances are not added to your application monitoring tool before bootstrapping is completed?

- A. Create an Auto Scaling group lifecycle hook to hold the instance in a Pending:Wait state until your bootstrapping is complete. Once bootstrapping is complete, notify Auto Scaling to complete the lifecycle hook and move the instance into a Pending:Proceed state.

- B. Use the default Amazon CloudWatch application metrics to monitor your application's health. Configure an Amazon SNS topic to send these CloudWatch alarms to the correct recipients.

- C. Tag all instances on launch to identify that they are in a pending state. Change your application monitoring tool to look for this tag before adding new instances, and then use the Amazon API to set the instance state to 'pending' until bootstrapping is complete.

- D. Increase the desired number of instances in your Auto Scaling group configuration to reduce the time it takes to bootstrap future instances.

Answer: A

Explanation:

Auto Scaling lifecycle hooks enable you to perform custom actions as Auto Scaling launches or terminates instances. For example, you could install or configure software on newly launched instances, or download log files from an instance before it terminates. After you add lifecycle hooks to your Auto Scaling group, they work as follows:

1. Auto Scaling responds to scale out events by launching instances and scale in events by terminating instances.

2. Auto Scaling puts the instance into a wait state (Pending:Wait or Terminating:Wait). The instance remains in this state until either you tell Auto Scaling to continue or the timeout period ends.

For more information on lifecycle hooks, please visit the below link:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/lifecycle-hooks.html
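Telling Auto Scaling to continue is typically done from the bootstrap script once setup has finished. The sketch below builds the parameters for boto3's `complete_lifecycle_action` call; the group name, hook name, instance ID, and helper function names are hypothetical and only serve the example.

```python
def build_complete_action(group_name: str, hook_name: str,
                          instance_id: str, bootstrap_ok: bool) -> dict:
    """Build keyword arguments for CompleteLifecycleAction.

    CONTINUE lets the instance leave Pending:Wait and go into service;
    ABANDON tells Auto Scaling to give up on the instance.
    """
    return {
        "AutoScalingGroupName": group_name,
        "LifecycleHookName": hook_name,
        "InstanceId": instance_id,
        "LifecycleActionResult": "CONTINUE" if bootstrap_ok else "ABANDON",
    }


def complete_lifecycle_action(params: dict) -> None:
    """Send the call to Auto Scaling (needs boto3, credentials and network)."""
    import boto3  # imported lazily so the builder works without boto3

    autoscaling = boto3.client("autoscaling")
    autoscaling.complete_lifecycle_action(**params)


params = build_complete_action(
    group_name="example-web-asg",         # hypothetical group name
    hook_name="example-bootstrap-hook",   # hypothetical hook name
    instance_id="i-0123456789abcdef0",    # hypothetical instance ID
    bootstrap_ok=True,
)
print(params["LifecycleActionResult"])
```

Because the instance is only reported as in service after the hook completes, a monitoring tool that polls for in-service instances would not see it during the bootstrap window.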

NEW QUESTION 8

You are building a Ruby on Rails application for internal, non-production use which uses MySQL as a database. You want developers without very much AWS experience to be able to deploy new code with a single command line push. You also want to set this up as simply as possible. Which tool is ideal for this setup?

- A. AWS CloudFormation

- B. AWS OpsWorks

- C. AWS ELB + EC2 with CLI Push

- D. AWS Elastic Beanstalk

Answer: D

Explanation:

With Elastic Beanstalk, you can quickly deploy and manage applications in the AWS Cloud without worrying about the infrastructure that runs those applications.

AWS Elastic Beanstalk reduces management complexity without restricting choice or control. You simply upload your application, and Elastic Beanstalk automatically handles the details of capacity provisioning, load balancing, scaling, and application health monitoring.

Elastic Beanstalk supports applications developed in Java, PHP, .NET, Node.js, Python, and Ruby, as well as different container types for each language.

For more information on Elastic beanstalk, please visit the below URL:

• http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/Welcome.html

NEW QUESTION 9

You run a 2000-engineer organization. You are about to begin using AWS at a large scale for the first time. You want to integrate with your existing identity management system running on Microsoft Active Directory, because your organization is a power-user of Active Directory. How should you manage your AWS identities in the most simple manner?

- A. Use AWS Directory Service Simple AD.

- B. Use AWS Directory Service AD Connector.

- C. Use a Sync Domain running on AWS Directory Service.

- D. Use an AWS Directory Sync Domain running on AWS Lambda.

Answer: B

Explanation:

AD Connector is a directory gateway with which you can redirect directory requests to your on-premises Microsoft Active Directory without caching any information in the cloud. AD Connector comes in two sizes, small and large. A small AD Connector is designed for smaller organizations of up to 500 users. A large AD Connector can support larger organizations of up to 5,000 users. Once set up, AD Connector offers the following benefits:

• Your end users and IT administrators can use their existing corporate credentials to log on to AWS applications such as Amazon WorkSpaces, Amazon WorkDocs, or Amazon WorkMail.

• You can manage AWS resources like Amazon EC2 instances or Amazon S3 buckets through IAM role-based access to the AWS Management Console.

• You can consistently enforce existing security policies (such as password expiration, password history, and account lockouts) whether users or IT administrators are accessing resources in your on-premises infrastructure or in the AWS Cloud.

• You can use AD Connector to enable multi-factor authentication by integrating with your existing RADIUS-based MFA infrastructure to provide an additional layer of security when users access AWS applications.

For more information on the AD Connector, please visit the below URL:

• http://docs.aws.amazon.com/directoryservice/latest/admin-guide/directory_ad_connector.html

NEW QUESTION 10

You need to deploy a multi-container Docker environment onto Elastic Beanstalk. Which of the following files can be used to deploy a set of Docker containers to Elastic Beanstalk?

- A. Dockerfile

- B. DockerMultifile

- C. Dockerrun.aws.json

- D. Dockerrun

Answer: C

Explanation:

The AWS Documentation specifies

A Dockerrun.aws.json file is an Elastic Beanstalk-specific JSON file that describes how to deploy a set of Docker containers as an Elastic Beanstalk application. You can use a Dockerrun.aws.json file for a multicontainer Docker environment.

Dockerrun.aws.json describes the containers to deploy to each container instance in the environment as well as the data volumes to create on the host instance for the containers to mount.

For more information on this, please visit the below URL:

http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker_v2config.html
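As a sketch of what such a file might contain, the snippet below generates a two-container version 2 `Dockerrun.aws.json` from Python. The container names, images, and memory figures are illustrative assumptions; only the overall shape (`AWSEBDockerrunVersion` and `containerDefinitions`) follows the multicontainer format.

```python
import json

# Illustrative multicontainer definition; names, images and memory are assumptions.
dockerrun = {
    "AWSEBDockerrunVersion": 2,
    "containerDefinitions": [
        {
            "name": "web",                      # hypothetical container name
            "image": "nginx:latest",
            "essential": True,
            "memory": 128,
            "portMappings": [{"hostPort": 80, "containerPort": 80}],
        },
        {
            "name": "worker",                   # hypothetical container name
            "image": "example/worker:latest",   # hypothetical image
            "essential": False,
            "memory": 128,
        },
    ],
}

# Serialize to the text that would be saved as Dockerrun.aws.json.
document = json.dumps(dockerrun, indent=2)
print(document)
```

The resulting text would be saved as `Dockerrun.aws.json` at the root of the application source bundle.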

NEW QUESTION 11

Your application stores sensitive information on an EBS volume attached to your EC2 instance. How can you protect your information? Choose two answers from the options given below

- A. Unmount the EBS volume, take a snapshot and encrypt the snapshot. Re-mount the Amazon EBS volume.

- B. It is not possible to encrypt an EBS volume, you must use a lifecycle policy to transfer data to S3 for encryption.

- C. Copy the unencrypted snapshot and check the box to encrypt the new snapshot. Volumes restored from this encrypted snapshot will also be encrypted.

- D. Create and mount a new, encrypted Amazon EBS volume. Move the data to the new volume. Delete the old Amazon EBS volume.

Answer: CD

Explanation:

These steps are given in the AWS documentation

To migrate data between encrypted and unencrypted volumes

1) Create your destination volume (encrypted or unencrypted, depending on your need).

2) Attach the destination volume to the instance that hosts the data to migrate.

3) Make the destination volume available by following the procedures in Making an Amazon EBS Volume Available for Use. For Linux instances, you can create a mount point at /mnt/destination and mount the destination volume there.

4) Copy the data from your source directory to the destination volume. It may be most convenient to use a bulk-copy utility for this.

To encrypt a volume's data by means of snapshot copying

1) Create a snapshot of your unencrypted EBS volume. This snapshot is also unencrypted.

2) Copy the snapshot while applying encryption parameters. The resulting target snapshot is encrypted.

3) Restore the encrypted snapshot to a new volume, which is also encrypted.

For more information on EBS Encryption, please refer to the below document link from AWS: http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/EBSEncryption.html

NEW QUESTION 12

You have an Autoscaling Group configured to launch EC2 Instances for your application. But you notice that the Autoscaling Group is not launching instances in the right proportion. In fact instances are being launched too fast. What can you do to mitigate this issue? Choose 2 answers from the options given below

- A. Adjust the cooldown period set for the Autoscaling Group

- B. Set a custom metric which monitors a key application functionality for the scale-in and scale-out process.

- C. Adjust the CPU threshold set for the Autoscaling scale-in and scale-out process.

- D. Adjust the Memory threshold set for the Autoscaling scale-in and scale-out process.

Answer: AB

Explanation:

The Auto Scaling cooldown period is a configurable setting for your Auto Scaling group that helps to ensure that Auto Scaling doesn't launch or terminate additional instances before the previous scaling activity takes effect.

For more information on the cool down period, please refer to the below link:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/Cooldown.html

Also it is better to monitor the application based on a key feature and then trigger the scale-in and scale-out feature accordingly. In the question, there is no mention of CPU or memory causing the issue.

NEW QUESTION 13

You are in charge of designing a number of CloudFormation templates for your organization. You are required to make changes to stack resources every now and then based on the requirement. How can you check the impact of the change to resources in a CloudFormation stack before deploying changes to the stack?

- A. There is no way to control this. You need to check for the impact beforehand.

- B. Use CloudFormation change sets to check for the impact of the changes.

- C. Use CloudFormation Stack Policies to check for the impact of the changes.

- D. Use CloudFormation Rolling Updates to check for the impact of the changes.

Answer: B

Explanation:

The AWS Documentation mentions

When you need to update a stack, understanding how your changes will affect running resources before you implement them can help you update stacks with confidence. Change sets allow you to preview how proposed changes to a stack might impact your running resources, for example, whether your changes will delete or replace any critical resources. AWS CloudFormation makes the changes to your stack only when you decide to execute the change set, allowing you to decide whether to proceed with your proposed changes or explore other changes by creating another change set. You can create and manage change sets using the AWS CloudFormation console, AWS CLI, or AWS CloudFormation API.

For more information on CloudFormation change sets, please visit the below URL: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/using-cfn-updating-stacks-changesets.html
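One way to act on a change set preview is to scan it for resources that would be removed or replaced. The sketch below works on a dictionary shaped like a `DescribeChangeSet` response; the sample data, resource names, and the `risky_changes` helper are made up for illustration.

```python
def risky_changes(change_set: dict) -> list:
    """List resources that a change set would remove or (possibly) replace."""
    risky = []
    for change in change_set.get("Changes", []):
        resource = change.get("ResourceChange", {})
        action = resource.get("Action")
        # Replacement is reported as the string "True", "False" or "Conditional".
        replaced = resource.get("Replacement") in ("True", "Conditional")
        if action == "Remove" or (action == "Modify" and replaced):
            risky.append((resource.get("LogicalResourceId"), action))
    return risky


# Hand-written sample in the shape of a DescribeChangeSet response.
sample = {
    "Changes": [
        {"Type": "Resource", "ResourceChange": {
            "Action": "Modify", "LogicalResourceId": "AppDatabase",
            "ResourceType": "AWS::RDS::DBInstance", "Replacement": "True"}},
        {"Type": "Resource", "ResourceChange": {
            "Action": "Modify", "LogicalResourceId": "WebServerGroup",
            "ResourceType": "AWS::AutoScaling::AutoScalingGroup", "Replacement": "False"}},
        {"Type": "Resource", "ResourceChange": {
            "Action": "Remove", "LogicalResourceId": "OldQueue",
            "ResourceType": "AWS::SQS::Queue"}},
    ]
}

print(risky_changes(sample))
```

In a real workflow the dictionary would come from the CloudFormation API or CLI rather than being written by hand, and the change set would only be executed after this kind of review.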

NEW QUESTION 14

Which of the following services can be used to provision an ECS cluster containing the following components in an automated way:

1) Application Load Balancer for distributing traffic among various task instances running in EC2 Instances

2) Single task instance on each EC2 running as part of auto scaling group

3) Ability to support various types of deployment strategies

- A. SAM

- B. OpsWorks

- C. Elastic Beanstalk

- D. CodeCommit

Answer: C

Explanation:

You can create Docker environments that support multiple containers per Amazon EC2 instance with the multi-container Docker platform for Elastic Beanstalk. Elastic Beanstalk uses Amazon Elastic Container Service (Amazon ECS) to coordinate container deployments to multi-container Docker environments. Amazon ECS provides tools to manage a cluster of instances running Docker containers. Elastic Beanstalk takes care of Amazon ECS tasks including cluster creation, task definition, and execution.

Please refer to the below AWS documentation: https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker_ecs.html

NEW QUESTION 15

Your company has a set of resources hosted in AWS. Your IT Supervisor is concerned with the costs being incurred by the resources running in AWS and wants to optimize on the costs as much as possible. Which of the following ways could help achieve this efficiently? Choose 2 answers from the options given below.

- A. Create CloudWatch alarms to monitor underutilized resources and either shut down or terminate resources which are not required.

- B. Use the Trusted Advisor to see underutilized resources.

- C. Create a script which monitors all the running resources and calculates the costs accordingly. Then analyze those resources accordingly and see which can be optimized.

- D. Create CloudWatch logs to monitor underutilized resources and either shut down or terminate resources which are not required.

Answer: AB

Explanation:

You can use CloudWatch alarms to see if resources are below a threshold for long periods of time. If so, you can decide either to stop or to terminate the resources.

For more information on CloudWatch alarms, please visit the below URL:

• http://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/AlarmThatSendsEmail.html

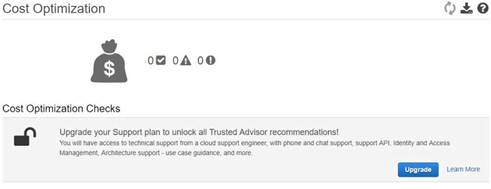

In the Trusted Advisor, when you enable the Cost optimization section, you will get all sorts of checks which can be used to optimize the costs of your AWS resources.

For more information on the Trusted Advisor, please visit the below URL:

• https://aws.amazon.com/premiumsupport/trustedadvisor/

NEW QUESTION 16

You are using Jenkins as your continuous integration system for the application hosted in AWS. The builds are then placed on newly launched EC2 instances. You want to ensure that the overall cost of the entire continuous integration and deployment pipeline is minimized. Which of the below options would meet these requirements? Choose 2 answers from the options given below.

- A. Ensure that all build tests are conducted using Jenkins before deploying the build to newly launched EC2 instances.

- B. Ensure that all build tests are conducted on the newly launched EC2 instances.

- C. Ensure the instances are launched only when the build tests are completed.

- D. Ensure the instances are created beforehand for faster turnaround time for the application builds to be placed.

Answer: AC

Explanation:

To ensure low cost, one can carry out the build tests on the Jenkins server itself. Once the build tests are completed, the build can then be transferred onto newly launched EC2 instances.

For more information on AWS and Jenkins, please visit the below URL:

• https://aws.amazon.com/getting-started/projects/setup-jenkins-build-server/

Option D is incorrect. It would be right choice in case the requirement is to get better speed.

NEW QUESTION 17

You work for a company that has multiple applications which are very different and built on different programming languages. How can you deploy applications as quickly as possible?

- A. Develop each app in one Docker container and deploy using Elastic Beanstalk

- B. Create a Lambda function deployment package consisting of code and any dependencies

- C. Develop each app in a separate Docker container and deploy using Elastic Beanstalk

- D. Develop each app in separate Docker containers and deploy using CloudFormation

Answer: C

Explanation:

Elastic Beanstalk supports the deployment of web applications from Docker containers. With Docker containers, you can define your own runtime environment. You can choose your own platform, programming language, and any application dependencies (such as package managers or tools) that aren't supported by other platforms. Docker containers are self-contained and include all the configuration information and software your web application requires to run.

Option A is not an efficient way to use Docker. The entire idea of Docker is that you have a separate environment for each application.

Option B is ideally used for running code, not for packaging applications and their dependencies.

Option D, deploying Docker containers using CloudFormation, is not ideal.

For more information on Docker and Elastic Beanstalk, please visit the below URL:

◆ http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker.html

NEW QUESTION 18

You have a web application hosted on EC2 instances. There are application changes which happen to the web application on a quarterly basis. Which of the following are examples of Blue/Green deployments which can be applied to the application? Choose 2 answers from the options given below.

- A. Deploy the application to an Elastic Beanstalk environment. Have a secondary Elastic Beanstalk environment in place with the updated application code. Use the swap URLs feature to switch onto the new environment.

- B. Place the EC2 instances behind an ELB. Have a secondary environment with EC2 instances and ELB in another region. Use Route53 with geo-location to route requests and switch over to the secondary environment.

- C. Deploy the application using OpsWorks stacks. Have a secondary stack for the new application deployment. Use Route53 to switch over to the new stack for the new application update.

- D. Deploy the application to an Elastic Beanstalk environment. Use the Rolling updates feature to perform a Blue/Green deployment.

Answer: AC

Explanation:

The AWS Documentation mentions the following

AWS Elastic Beanstalk is a fast and simple way to get an application up and running on AWS. It's perfect for developers who want to deploy code without worrying about managing the underlying infrastructure. Elastic Beanstalk supports Auto Scaling and Elastic Load Balancing, both of which enable blue/green deployment.

Elastic Beanstalk makes it easy to run multiple versions of your application and provides capabilities to swap the environment URLs, facilitating blue/green deployment.

AWS OpsWorks is a configuration management service based on Chef that allows customers to deploy and manage application stacks on AWS. Customers can specify resource and application configuration, and deploy and monitor running resources. OpsWorks simplifies cloning entire stacks when you're preparing blue/green environments.

For more information on Blue/Green deployments, please refer to the below link:

• https://d0.awsstatic.com/whitepapers/AWS_Blue_Green_Deployments.pdf

NEW QUESTION 19

A company has developed a Ruby on Rails content management platform. Currently, OpsWorks with several stacks for dev, staging, and production is being used to deploy and manage the application. Now the company wants to start using Python instead of Ruby. How should the company manage the new deployment? Choose the correct answer from the options below

- A. Update the existing stack with Python application code and deploy the application using the deploy life-cycle action to implement the application code.

- B. Create a new stack that contains a new layer with the Python code. To cut over to the new stack the company should consider using Blue/Green deployment.

- C. Create a new stack that contains the Python application code and manage separate deployments of the application via the secondary stack using the deploy lifecycle action to implement the application code.

- D. Create a new stack that contains the Python application code and manages separate deployments of the application via the secondary stack.

Answer: B

Explanation:

Blue/green deployment is a technique for releasing applications by shifting traffic between two identical environments running different versions of the application.

Blue/green deployments can mitigate common risks associated with deploying software, such as downtime and rollback capability.

Please find below the link to a white paper on blue/green deployments:

• https://d0.awsstatic.com/whitepapers/AWS_Blue_Green_Deployments.pdf

NEW QUESTION 20

Your development team is using an Elastic Beanstalk environment. After a week, the environment was torn down and a new one was created. When the development team tried to access the data on the older environment, it was not available. Why is this the case?

- A. This is because the underlying EC2 instances are created with encrypted storage and cannot be accessed once the environment has been terminated.

- B. This is because the underlying EC2 instances are created with IOPS volumes and cannot be accessed once the environment has been terminated.

- C. This is because before the environment termination, Elastic Beanstalk copies the data to DynamoDB, and hence the data is not present in the EBS volumes.

- D. This is because the underlying EC2 instances are created with no persistent local storage.

Answer: D

Explanation:

The AWS documentation mentions the following

Elastic Beanstalk applications run on Amazon EC2 instances that have no persistent local storage.

When the Amazon EC2 instances terminate, the local file system is not saved, and new Amazon EC2 instances start with a default file system. You should design your application to store data in a persistent data source.

For more information on Elastic Beanstalk design concepts, please refer to the below link: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/concepts.concepts.design.html

NEW QUESTION 21

Of the 6 available sections on a Cloud Formation template (Template Description Declaration, Template Format Version Declaration, Parameters, Resources, Mappings, Outputs), which is the only one required for a CloudFormation template to be accepted? Choose an answer from the options below

- A. Parameters

- B. Template Declaration

- C. Mappings

- D. Resources

Answer: D

Explanation:

If you refer to the documentation, you will see that Resources is the only mandatory section. The documentation describes it as follows:

Specifies the stack resources and their properties, such as an Amazon Elastic Compute Cloud instance or an Amazon Simple Storage Service bucket.

For more information on cloudformation templates, please refer to the below link:

• http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/template-anatomy.html
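For illustration, a template containing nothing but a `Resources` section can be produced as follows. The logical ID `ExampleBucket` is a hypothetical name chosen for this sketch.

```python
import json

# Minimal template: only the Resources section is present.
template = {
    "Resources": {
        "ExampleBucket": {            # hypothetical logical ID
            "Type": "AWS::S3::Bucket"
        }
    }
}

template_body = json.dumps(template, indent=2)
print(template_body)
```

All other sections (format version, description, parameters, mappings, outputs) are simply left out here.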

NEW QUESTION 22

You are creating an application which stores extremely sensitive financial information. All information in the system must be encrypted at rest and in transit. Which of these is a violation of this policy?

- A. ELB SSL termination.

- B. ELB Using Proxy Protocol v1.

- C. CloudFront Viewer Protocol Policy set to HTTPS redirection.

- D. Telling S3 to use AES256 on the server-side.

Answer: A

Explanation:

If you use SSL termination, your servers will always get non-secure connections and will never know whether users used a more secure channel or not. If you are using Elastic beanstalk to configure the ELB, you can use the below article to ensure end to end encryption. http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/configuring-https-endtoend.html

NEW QUESTION 23

You have a legacy application running that uses an m4.large instance size and cannot scale with Auto Scaling, but only has peak performance 5% of the time. This is a huge waste of resources and money so your Senior Technical Manager has set you the task of trying to reduce costs while still keeping the legacy application running as it should. Which of the following would best accomplish the task your manager has set you? Choose the correct answer from the options below

- A. Use a T2 burstable performance instance.

- B. Use a C4.large instance with enhanced networking.

- C. Use two t2.nano instances that have Single Root I/O Virtualization.

- D. Use t2.nano instance and add spot instances when they are required.

Answer: A

Explanation:

The AWS documentation recommends T2 EC2 instance types for workloads that do not use the full CPU often.

T2

T2 instances are Burstable Performance Instances that provide a baseline level of CPU performance with the ability to burst above the baseline.

T2 Unlimited instances can sustain high CPU performance for as long as a workload needs it. For most general-purpose workloads, T2 Unlimited instances will provide ample performance without any additional charges. If the instance needs to run at higher CPU utilization for a prolonged period, it can also do so at a flat additional charge of 5 cents per vCPU-hour.

The baseline performance and ability to burst are governed by CPU Credits. T2 instances receive CPU Credits continuously at a set rate depending on the instance size, accumulating CPU Credits when they are idle, and consuming CPU credits when they are active. T2 instances are a good choice for a variety of general-purpose workloads including micro-services, low-latency interactive applications, small and medium databases, virtual desktops, development, build and stage environments, code repositories, and product prototypes. For more information see Burstable Performance Instances.

For more information on EC2 instance types, please see the below link: https://aws.amazon.com/ec2/instance-types/
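The CPU credit mechanism described above can be sketched as a simple hourly balance calculation. This is a simplified model, not the AWS accounting algorithm. The earn rate of 36 credits per hour and the cap of 864 credits are illustrative figures assumed for a two-vCPU instance, so check the current documentation before relying on them. One CPU credit is treated as one vCPU running at 100% for one minute.

```python
def simulate_credit_balance(earn_per_hour, max_balance, spend_per_hour, start_balance=0):
    """Track a CPU credit balance hour by hour.

    spend_per_hour is a sequence with the credits consumed in each hour.
    The balance never exceeds max_balance and never drops below zero.
    """
    balance = start_balance
    history = []
    for spend in spend_per_hour:
        balance = min(max_balance, balance + earn_per_hour)  # credits earned this hour
        balance = max(0, balance - spend)                    # credits consumed this hour
        history.append(balance)
    return history


# A workload that peaks 5% of the time: 19 idle hours, then 1 busy hour.
# A busy hour on 2 vCPUs at 100% costs 2 * 60 = 120 credits.
# Idle hours are assumed to cost nothing, which is a simplification.
hourly_spend = [0] * 19 + [120]
history = simulate_credit_balance(earn_per_hour=36, max_balance=864,
                                  spend_per_hour=hourly_spend)
print("Balance after the busy hour:", history[-1])
```

Under these assumptions the credits saved during idle hours more than cover the occasional peak, which is why a burstable instance suits the legacy application in the question.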

NEW QUESTION 24

Which of the following services, along with CloudFormation, helps in building a Continuous Delivery release practice?

- A. AWS Config

- B. AWS Lambda

- C. AWS CloudTrail

- D. AWS CodePipeline

Answer: D

Explanation:

The AWS documentation mentions the following:

Continuous delivery is a release practice in which code changes are automatically built, tested, and prepared for release to production. With AWS CloudFormation and AWS CodePipeline, you can use continuous delivery to automatically build and test changes to your AWS CloudFormation templates before promoting them to production stacks. This release process lets you rapidly and reliably make changes to your AWS infrastructure.

For more information on Continuous Delivery, please visit the below URL: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/continuous-delivery-codepipeline.html
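To make the idea more concrete, the sketch below builds the two CloudFormation deploy actions that such a pipeline stage might contain: one that creates a change set from the template, and one that executes it. It only assembles plain dictionaries in the shape CodePipeline uses for action declarations. The stack name, change set name, artifact name and template file are placeholders, and settings a real pipeline needs (such as the role ARN and capabilities) are left out.

```python
def cloudformation_change_set_actions(stack_name, change_set_name, template_path):
    """Build two deploy actions: create a change set, then execute it."""
    action_type = {
        "category": "Deploy",
        "owner": "AWS",
        "provider": "CloudFormation",
        "version": "1",
    }
    create_change_set = {
        "name": "CreateChangeSet",
        "actionTypeId": action_type,
        "runOrder": 1,
        "inputArtifacts": [{"name": "SourceOutput"}],  # placeholder artifact name
        "configuration": {
            "ActionMode": "CHANGE_SET_REPLACE",
            "StackName": stack_name,
            "ChangeSetName": change_set_name,
            "TemplatePath": template_path,
        },
    }
    execute_change_set = {
        "name": "ExecuteChangeSet",
        "actionTypeId": action_type,
        "runOrder": 2,
        "configuration": {
            "ActionMode": "CHANGE_SET_EXECUTE",
            "StackName": stack_name,
            "ChangeSetName": change_set_name,
        },
    }
    return [create_change_set, execute_change_set]


# All names below are hypothetical values chosen for this sketch.
actions = cloudformation_change_set_actions(
    stack_name="example-prod-stack",
    change_set_name="example-change-set",
    template_path="SourceOutput::template.yaml",
)
for action in actions:
    print(action["runOrder"], action["name"], action["configuration"]["ActionMode"])
```

Splitting the work into a create step and an execute step leaves room for a test or approval step in between, before the change reaches the production stack.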

NEW QUESTION 25

Your CTO has asked you to make sure that you know what all users of your AWS account are doing to change resources at all times. She wants a report of who is doing what over time, reported to her once per week, for as broad a resource type group as possible. How should you do this?

- A. Create a global AWS CloudTrail Trail. Configure a script to aggregate the log data delivered to S3 once per week and deliver this to the CTO.

- B. Use CloudWatch Events Rules with an SNS topic subscribed to all AWS API calls. Subscribe the CTO to an email type delivery on this SNS Topic.

- C. Use AWS IAM credential reports to deliver a CSV of all uses of IAM User Tokens over time to the CTO.

- D. Use AWS Config with an SNS subscription on a Lambda, and insert these changes over time into a DynamoDB table. Generate reports based on the contents of this table.

Answer: A

Explanation:

AWS CloudTrail is an AWS service that helps you enable governance, compliance, and operational and risk auditing of your AWS account. Actions taken by a user, role, or an AWS service are recorded as events in CloudTrail. Events include actions taken in the AWS Management Console, AWS Command Line Interface, and AWS SDKs and APIs.

Visibility into your AWS account activity is a key aspect of security and operational best practices. You can use CloudTrail to view, search, download, archive, analyze, and respond to account activity across your AWS infrastructure. You can identify who or what took which action, what resources were acted upon, when the event occurred, and other details to help you analyze and respond to activity in your AWS account.

For more information on CloudTrail, please visit the below URL:

• http://docs.aws.amazon.com/awscloudtrail/latest/userguide/cloudtrail-user-guide.html
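The weekly aggregation script mentioned in the answer could start from something like the sketch below. It assumes the log files have already been downloaded from S3 and parsed, and that each record carries the usual CloudTrail fields `userIdentity`, `eventSource` and `eventName`. The sample records are invented for illustration.

```python
from collections import Counter


def summarize_activity(records):
    """Count events per (identity, service, action) across CloudTrail records."""
    counts = Counter()
    for record in records:
        identity = record.get("userIdentity", {})
        # Prefer the ARN; fall back to the identity type if no ARN is present.
        who = identity.get("arn") or identity.get("type", "unknown")
        key = (who,
               record.get("eventSource", "unknown"),
               record.get("eventName", "unknown"))
        counts[key] += 1
    return counts


# Hypothetical records, shaped like entries in a log file's "Records" list.
sample_records = [
    {"userIdentity": {"type": "IAMUser", "arn": "arn:aws:iam::111122223333:user/alice"},
     "eventSource": "ec2.amazonaws.com", "eventName": "RunInstances"},
    {"userIdentity": {"type": "IAMUser", "arn": "arn:aws:iam::111122223333:user/alice"},
     "eventSource": "ec2.amazonaws.com", "eventName": "RunInstances"},
    {"userIdentity": {"type": "Root"},
     "eventSource": "s3.amazonaws.com", "eventName": "DeleteBucket"},
]

report = summarize_activity(sample_records)
for (who, source, action), count in report.most_common():
    print(f"{who} called {action} on {source} {count} time(s)")
```

The resulting counts can then be formatted into whatever weekly report the CTO prefers.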
